_This is a different approach to #20267, I took the liberty of adapting

some parts, see below_

## Context

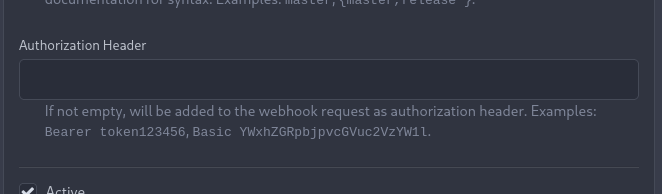

In some cases, a webhook endpoint requires some kind of authentication.

The usual way is by sending a static `Authorization` header, with a

given token. For instance:

- Matrix expects a `Bearer <token>` (already implemented, by storing the

header cleartext in the metadata - which is buggy on retry #19872)

- TeamCity #18667

- Gitea instances #20267

- SourceHut https://man.sr.ht/graphql.md#authentication-strategies (this

is my actual personal need :)

## Proposed solution

Add a dedicated encrypted column to the webhook table (instead of storing
it as meta as proposed in #20267), so that it is available for all
present and future hook types (especially the custom ones #19307).

This would also solve the buggy matrix retry #19872.

As a first step, I would recommend focusing on the backend logic and

improve the frontend at a later stage. For now the UI is a simple

`Authorization` field (which could be later customized with `Bearer` and

`Basic` switches):

The header name is hard-coded, since I couldn't find any use case
justifying otherwise.
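A minimal sketch of the delivery side, assuming the stored value has already been decrypted by the caller. `newHookRequest` and its parameters are hypothetical names for illustration, not the actual Gitea code:

```go
package main

import (
	"bytes"
	"fmt"
	"net/http"
)

// newHookRequest builds a webhook delivery request. The header name is fixed
// to "Authorization"; authHeader is the full header value (for example
// "Bearer <token>") and is assumed to be decrypted already.
func newHookRequest(url string, payload []byte, authHeader string) (*http.Request, error) {
	req, err := http.NewRequest(http.MethodPost, url, bytes.NewReader(payload))
	if err != nil {
		return nil, err
	}
	req.Header.Set("Content-Type", "application/json")
	if authHeader != "" {
		// Only send the header when the hook has one configured.
		req.Header.Set("Authorization", authHeader)
	}
	return req, nil
}

func main() {
	req, err := newHookRequest("https://example.com/hook", []byte(`{}`), "Bearer s3cr3t")
	if err != nil {
		panic(err)
	}
	fmt.Println(req.Header.Get("Authorization"))
}
```

Because the value is attached when the request is built rather than stored in the payload metadata, a retry can rebuild the same request from the hook record.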

## Questions

- What do you think of this approach? @justusbunsi @Gusted @silverwind

- ~~How are the migrations generated? Do I have to manually create a new

file, or is there a command for that?~~

- ~~I started adding it to the API: should I complete it or should I

drop it? (I don't know how much the API is actually used)~~

## Done as well:

- add a migration for the existing matrix webhooks and remove the

`Authorization` logic there

_Closes #19872_

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Co-authored-by: Gusted <williamzijl7@hotmail.com>

Co-authored-by: delvh <dev.lh@web.de>

I found myself wondering whether a PR I scheduled for automerge was

actually merged. It was, but I didn't receive a mail notification for it

- that makes sense considering I am the doer and usually don't want to

receive such notifications. But ideally I want to receive a notification

when a PR was merged because I scheduled it for automerge.

This PR implements exactly that.

The implementation works, but I wonder if there's a way to avoid passing

the "This PR was automerged" state down so much. I tried solving this

via the database (checking if there's an automerge scheduled for this PR

when sending the notification) but that did not work reliably, probably

because sending the notification happens async and the entry might have

already been deleted. My implementation might be the most

straightforward but maybe not the most elegant.

Signed-off-by: Andrew Thornton <art27@cantab.net>

Co-authored-by: Lauris BH <lauris@nix.lv>

Co-authored-by: Andrew Thornton <art27@cantab.net>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

GitHub allows releases with target commitish `refs/heads/BRANCH`, which

then causes issues in Gitea after migration. This fix handles the case where
the branch name already carries that prefix.
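As a rough illustration (the function name is hypothetical and the real migration code may differ), the prefix handling can be sketched like this:

```go
package main

import (
	"fmt"
	"strings"
)

const branchPrefix = "refs/heads/"

// normalizeTargetCommitish strips a leading "refs/heads/" so that a release
// target is stored as a plain branch name. Values without that prefix
// (plain branch names, SHAs) are returned unchanged.
func normalizeTargetCommitish(target string) string {
	return strings.TrimPrefix(target, branchPrefix)
}

func main() {
	fmt.Println(normalizeTargetCommitish("refs/heads/main"))
	fmt.Println(normalizeTargetCommitish("main"))
}
```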

Fixes #20317

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

A bug was introduced in #17865 where filepath.Join is used to join

putative unadopted repository owner and names together. This is

incorrect as these names are then used as repository names - which should

have the '/' separator. This means that adoption will not work on

Windows servers.
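A small sketch of the distinction, using a hypothetical helper name. `path.Join` always uses `/`, whereas `filepath.Join` uses the OS separator (`\` on Windows):

```go
package main

import (
	"fmt"
	"path"
)

// repoFullName joins owner and repository name with a forward slash on every
// platform. filepath.Join would produce `owner\name` on Windows, which is not
// a valid "owner/name" repository reference.
func repoFullName(owner, name string) string {
	return path.Join(owner, name)
}

func main() {
	fmt.Println(repoFullName("org", "repo"))
}
```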

Fix #21632

Signed-off-by: Andrew Thornton <art27@cantab.net>

The OAuth spec [defines two types of

client](https://datatracker.ietf.org/doc/html/rfc6749#section-2.1),

confidential and public. Previously Gitea assumed all clients to be

confidential.

> OAuth defines two client types, based on their ability to authenticate

securely with the authorization server (i.e., ability to

> maintain the confidentiality of their client credentials):

>

> confidential

> Clients capable of maintaining the confidentiality of their

credentials (e.g., client implemented on a secure server with

> restricted access to the client credentials), or capable of secure

client authentication using other means.

>

> **public

> Clients incapable of maintaining the confidentiality of their

credentials (e.g., clients executing on the device used by the resource

owner, such as an installed native application or a web browser-based

application), and incapable of secure client authentication via any

other means.**

>

> The client type designation is based on the authorization server's

definition of secure authentication and its acceptable exposure levels

of client credentials. The authorization server SHOULD NOT make

assumptions about the client type.

https://datatracker.ietf.org/doc/html/rfc8252#section-8.4

> Authorization servers MUST record the client type in the client

registration details in order to identify and process requests

accordingly.

Require PKCE for public clients:

https://datatracker.ietf.org/doc/html/rfc8252#section-8.1

> Authorization servers SHOULD reject authorization requests from native

apps that don't use PKCE by returning an error message
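A hedged sketch of both checks. The `app` type and function names are illustrative assumptions, not Gitea's actual types; the S256 computation follows RFC 7636:

```go
package main

import (
	"crypto/sha256"
	"crypto/subtle"
	"encoding/base64"
	"errors"
	"fmt"
)

// app is a hypothetical, pared-down client registration record that stores
// the client type, as RFC 8252 section 8.4 requires.
type app struct {
	Confidential bool
}

var errPKCERequired = errors.New("PKCE is required for public clients")

// checkAuthorizeRequest rejects public clients that send no code challenge.
func checkAuthorizeRequest(a app, codeChallenge string) error {
	if !a.Confidential && codeChallenge == "" {
		return errPKCERequired
	}
	return nil
}

// verifyS256 reports whether verifier matches challenge under the S256
// method: BASE64URL(SHA256(verifier)) without padding.
func verifyS256(verifier, challenge string) bool {
	sum := sha256.Sum256([]byte(verifier))
	computed := base64.RawURLEncoding.EncodeToString(sum[:])
	return subtle.ConstantTimeCompare([]byte(computed), []byte(challenge)) == 1
}

func main() {
	fmt.Println(checkAuthorizeRequest(app{Confidential: false}, ""))
	// Test vector from RFC 7636, Appendix B.
	fmt.Println(verifyS256(
		"dBjftJeZ4CVP-mB92K27uhbUJU1p1r_wW1gFWFOEjXk",
		"E9Melhoa2OwvFrEMTJguCHaoeK1t8URWbuGJSstw-cM",
	))
}
```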

Fixes #21299

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Previously, mentioning a user would link to their profile regardless of
whether the user existed. This change checks that the user exists and only
then adds a link to their profile.
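A simplified sketch of the idea. The mention pattern, the link format and the `exists` callback are assumptions for illustration; the real markup processor is more involved:

```go
package main

import (
	"fmt"
	"regexp"
)

// mentionPattern is a deliberately simple pattern for "@name" mentions.
var mentionPattern = regexp.MustCompile(`@[A-Za-z0-9_-]+`)

// linkMentions turns "@name" into a profile link only when exists(name)
// reports true; unknown names are left as plain text.
func linkMentions(text string, exists func(name string) bool) string {
	return mentionPattern.ReplaceAllStringFunc(text, func(m string) string {
		name := m[1:] // drop the leading '@'
		if !exists(name) {
			return m
		}
		return fmt.Sprintf(`<a href="/%s">%s</a>`, name, m)
	})
}

func main() {
	known := map[string]bool{"alice": true}
	exists := func(name string) bool { return known[name] }
	fmt.Println(linkMentions("hi @alice and @ghost", exists))
}
```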

* Fixes #3444

Signed-off-by: Yarden Shoham <hrsi88@gmail.com>

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

When actions besides "delete" are performed on issues, the milestone

counter is updated. However, since deleting issues goes through a

different code path, the associated milestone's count wasn't being

updated, resulting in inaccurate counts until another issue in the same

milestone had a non-delete action performed on it.

I verified this change fixes the inaccurate counts using a local docker

build.

Fixes #21254

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

At the moment a repository reference is needed for webhooks. With the

upcoming package PR we need to send webhooks without a repository

reference. For example a package is uploaded to an organization. In

theory this enables the usage of webhooks for future user actions.

This PR removes the repository id from `HookTask` and changes how the

hooks are processed (see `services/webhook/deliver.go`). In a follow up

PR I want to remove the usage of the `UniqueQueue` and replace it with a

normal queue because there is no reason to be unique.

Co-authored-by: 6543 <6543@obermui.de>

When a PR reviewer reviewed a file on a commit that was later gc'ed,

they would always get a `500` response from then on when loading the PR.

This PR simply ignores that error and instead marks all files as

unchanged.

This approach was chosen as the only feasible option without diving into

**a lot** of error handling.

Fixes #21392

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

A lot of our code is repeatedly testing if individual errors are

specific types of Not Exist errors. This is repetitive and unnecessary.

`Unwrap() error` provides a common way of labelling an error as a

NotExist error and we can/should use this.

This PR has chosen to use the common `io/fs` errors e.g.

`fs.ErrNotExist` for our errors. This is in some ways not completely

correct as these are not filesystem errors but it seems like a

reasonable thing to do and would allow us to simplify a lot of our code

to `errors.Is(err, fs.ErrNotExist)` instead of

`package.IsErr...NotExist(err)`

I am open to suggestions to use a different base error - perhaps

`models/db.ErrNotExist` if that would be felt to be better.

Signed-off-by: Andrew Thornton <art27@cantab.net>

Co-authored-by: delvh <dev.lh@web.de>

For normal commits the notification URL was wrong because oldCommitID was taken from the already shrunk commits list.

This PR moves the commits list shrinking after the oldCommitID assignment.

Fixes #21379

The commits are capped by `setting.UI.FeedMaxCommitNum` so

`len(commits)` is not the correct number. So this PR adds a new

`TotalCommits` field.
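A reduced sketch of why the extra field is needed. The `pushCommits` type and `maxCommits` parameter are illustrative stand-ins, with `maxCommits` playing the role of `setting.UI.FeedMaxCommitNum`:

```go
package main

import "fmt"

// pushCommits is a hypothetical, reduced payload type.
type pushCommits struct {
	Commits      []string // at most maxCommits entries
	TotalCommits int      // number of commits in the push before capping
}

// newPushCommits caps the list at maxCommits but records the real total,
// so consumers do not have to rely on len(Commits).
func newPushCommits(all []string, maxCommits int) pushCommits {
	capped := all
	if maxCommits >= 0 && len(capped) > maxCommits {
		capped = capped[:maxCommits]
	}
	return pushCommits{Commits: capped, TotalCommits: len(all)}
}

func main() {
	pc := newPushCommits([]string{"c1", "c2", "c3", "c4", "c5"}, 3)
	fmt.Println(len(pc.Commits), pc.TotalCommits)
}
```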

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

When merge was changed to run in the background context, the db updates

were still running in the request context. This means that the merge could
succeed while the db was left without the update.

This PR changes both of these to run in the hammer context. This is not
complete rollback protection, but it's much better.

Fix #21332

Signed-off-by: Andrew Thornton <art27@cantab.net>


Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

We should only log CheckPath errors if they are not simply due to

context cancellation - and we should add a little more context to the

error message.

Fix #20709

Signed-off-by: Andrew Thornton <art27@cantab.net>

Close #20315 (fix the panic when parsing invalid input), Speed up #20231 (use ls-tree without size field)

Introduce ListEntriesRecursiveFast (ls-tree without size) and ListEntriesRecursiveWithSize (ls-tree with size)

Only load SECRET_KEY and INTERNAL_TOKEN if they exist.

Never write the config file if the keys do not exist, which was only a fallback for Gitea upgraded from < 1.5

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

This is useful in scenarios where the reverse proxy may have knowledge

of user emails, but does not know about usernames set on gitea,

as in the feature request in #19948.

I tested this by setting up a fresh gitea install with one user `mhl`

and email `m.hasnain.lakhani@gmail.com`. I then created a private repo,

and configured gitea to allow reverse proxy authentication.

Via curl I confirmed that these two requests now work and return 200s:

curl http://localhost:3000/mhl/private -I --header "X-Webauth-User: mhl"

curl http://localhost:3000/mhl/private -I --header "X-Webauth-Email: m.hasnain.lakhani@gmail.com"

Before this commit, the second request did not work.

I also verified that if I provide an invalid email or user,

a 404 is correctly returned as before

Closes #19948

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Co-authored-by: 6543 <6543@obermui.de>

The behaviour of `PreventSurroundingPre` has changed in

https://github.com/alecthomas/chroma/pull/618 so that apparently it now

causes line wrapper tags to be no longer emitted, but we need some form

of indication to split the HTML into lines, so I did what

https://github.com/yuin/goldmark-highlighting/pull/33 did and added the

`nopWrapper`.

Maybe there are more elegant solutions but for some reason, just

splitting the HTML string on `\n` did not work.

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Both allow only a limited number of characters. If you enter more, you get an error
message, so it makes sense to limit the length of the input fields.

Slightly relax the MaxSize of repo's Description and Website

If you create a new branch while viewing a file or directory, you
get redirected to the root of the repo. With this PR, you keep your
current path instead of getting redirected to the repo root.

The `go-licenses` make task introduced in #21034 is being run on make vendor

and occasionally causes an empty go-licenses file if the vendors need to

change. This should be moved to the generate task as it is a generated file.

Now because of this change we also need to split generation into two separate

steps:

1. `generate-backend`

2. `generate-frontend`

In the future it would probably be useful to make `generate-swagger` part of `generate-frontend` but it's not tolerated with our .drone.yml

Ref #21034

Signed-off-by: Andrew Thornton <art27@cantab.net>


Co-authored-by: delvh <dev.lh@web.de>

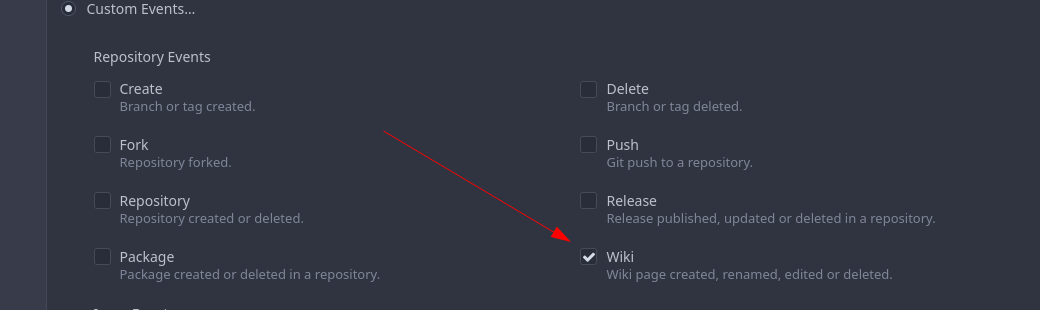

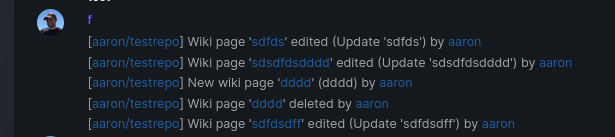

Add support for triggering webhook notifications on wiki changes.

This PR contains frontend and backend for webhook notifications on wiki actions (create a new page, rename a page, edit a page and delete a page). The frontend got a new checkbox under the Custom Event -> Repository Events section. There is only one checkbox for create/edit/rename/delete actions, because it makes no sense to separate it and others like releases or packages follow the same schema.

The actions themselves are separated, so that different notifications will be executed (with the "action" field). All the webhook receivers implement the new interface method (Wiki) and the corresponding tests.

When implementing this, I encountered a little bug when editing a wiki page. Creating and editing a wiki page is technically the same action and is handled by the `updateWikiPage` function. But the function needs to know whether it is a new wiki page or just a change. This distinction is made by the `action` parameter, but the frontend did not send it on form submit. This PR fixes that by adding the `action` parameter with the values `_new` or `_edit`, which are then used by the `updateWikiPage` function.

I've done integration tests with matrix and gitea (http).

Fix #16457

Signed-off-by: Aaron Fischer <mail@aaron-fischer.net>

A testing cleanup.

This pull request replaces `os.MkdirTemp` with `t.TempDir`. We can use the `T.TempDir` function from the `testing` package to create a temporary directory. The directory created by `T.TempDir` is automatically removed when the test and all its subtests complete.

This saves us at least 2 lines (error check, and cleanup) on every instance, or in some cases adds cleanup that we forgot.

Reference: https://pkg.go.dev/testing#T.TempDir

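```go
import (
	"os"
	"testing"

	"github.com/stretchr/testify/require"
)

// before: create the directory by hand and remember to clean it up
func TestFooBefore(t *testing.T) {
	tmpDir, err := os.MkdirTemp("", "")
	require.NoError(t, err)
	defer os.RemoveAll(tmpDir)
}

// now: the testing package removes the directory when the test finishes
func TestFooNow(t *testing.T) {
	tmpDir := t.TempDir()
	_ = tmpDir // use tmpDir in the test body
}
```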

Signed-off-by: Eng Zer Jun <engzerjun@gmail.com>

When migrating add several more important sanity checks:

* SHAs must be SHAs

* Refs must be valid Refs

* URLs must be reasonable
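The three checks can be sketched roughly as follows. The exact rules here (40-character hex SHAs, a small subset of git's ref-name rules, http/https URLs only) are assumptions chosen for illustration, not the rules the migration code actually applies:

```go
package main

import (
	"fmt"
	"net/url"
	"regexp"
	"strings"
)

// shaPattern accepts full 40-character hexadecimal SHA-1 values.
var shaPattern = regexp.MustCompile(`^[0-9a-fA-F]{40}$`)

func isValidSHA(s string) bool {
	return shaPattern.MatchString(s)
}

// isValidRef checks a deliberately small subset of git's ref-name rules.
func isValidRef(ref string) bool {
	if !strings.HasPrefix(ref, "refs/") || strings.HasSuffix(ref, "/") || strings.HasSuffix(ref, ".lock") {
		return false
	}
	return !strings.Contains(ref, "..") && !strings.ContainsAny(ref, " ~^:?*[\\")
}

// isReasonableURL accepts only absolute http(s) URLs with a host.
func isReasonableURL(raw string) bool {
	u, err := url.Parse(raw)
	if err != nil {
		return false
	}
	return (u.Scheme == "http" || u.Scheme == "https") && u.Host != ""
}

func main() {
	fmt.Println(isValidSHA("da39a3ee5e6b4b0d3255bfef95601890afd80709"))
	fmt.Println(isValidRef("refs/heads/main"))
	fmt.Println(isReasonableURL("javascript:alert(1)"))
}
```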

Signed-off-by: Andrew Thornton <art27@cantab.net>


Co-authored-by: techknowlogick <matti@mdranta.net>

There are a lot of go dependencies that appear old and we should update them.

The following packages have been updated:

* codeberg.org/gusted/mcaptcha

* github.com/markbates/goth

* github.com/buildkite/terminal-to-html

* github.com/caddyserver/certmagic

* github.com/denisenkom/go-mssqldb

* github.com/duo-labs/webauthn

* github.com/editorconfig/editorconfig-core-go/v2

* github.com/felixge/fgprof

* github.com/gliderlabs/ssh

* github.com/go-ap/activitypub

* github.com/go-git/go-git/v5

* github.com/go-ldap/ldap/v3

* github.com/go-swagger/go-swagger

* github.com/go-testfixtures/testfixtures/v3

* github.com/golang-jwt/jwt/v4

* github.com/klauspost/compress

* github.com/lib/pq

* gitea.com/lunny/dingtalk_webhook - instead of github.com

* github.com/mattn/go-sqlite3

* github.com/mattn/go-isatty

* github.com/minio/minio-go/v7

* github.com/niklasfasching/go-org

* github.com/prometheus/client_golang

* github.com/stretchr/testify

* github.com/unrolled/render

* github.com/xanzy/go-gitlab

* gopkg.in/ini.v1

Signed-off-by: Andrew Thornton <art27@cantab.net>

* fix hard-coded timeout and error panic in API archive download endpoint

This commit updates the `GET /api/v1/repos/{owner}/{repo}/archive/{archive}`

endpoint which prior to this PR had a couple of issues.

1. The endpoint had a hard-coded 20s timeout for the archiver to complete after

which a 500 (Internal Server Error) was returned to client. For a scripted

API client there was no clear way of telling that the operation timed out and

that it should retry.

2. Whenever the timeout _did occur_, the code used to panic. This was caused by

the API endpoint "delegating" to the same call path as the web, which uses a

slightly different way of reporting errors (HTML rather than JSON for

example).

More specifically, `api/v1/repo/file.go#GetArchive` just called through to

`web/repo/repo.go#Download`, which expects the `Context` to have a `Render`

field set, but which is `nil` for API calls. Hence, a `nil` pointer error.

The code addresses (1) by dropping the hard-coded timeout. Instead, any

timeout/cancelation on the incoming `Context` is used.

The code addresses (2) by updating the API endpoint to use a separate call path

for the API-triggered archive download. This avoids producing HTML-errors on

errors (it now produces JSON errors).

Signed-off-by: Peter Gardfjäll <peter.gardfjall.work@gmail.com>

The recovery, API, Web and package frameworks all create their own HTML

Renderers. This increases the memory requirements of Gitea

unnecessarily with duplicate templates being kept in memory.

Further the reloading framework in dev mode for these involves locking

and recompiling all of the templates on each load. This will potentially

hide concurrency issues and it is inefficient.

This PR stores the templates renderer in the context and stores this

context in the NormalRoutes, it then creates a fsnotify.Watcher

framework to watch files.

The watching framework is then extended to the mailer templates which

were previously not being reloaded in dev.

Then the locales are simplified to a similar structure.

Fix #20210

Fix #20211

Fix #20217

Signed-off-by: Andrew Thornton <art27@cantab.net>

Some Migration Downloaders provide re-writing of CloneURLs that may point to

unallowed urls. Recheck after the CloneURL is rewritten.

Signed-off-by: Andrew Thornton <art27@cantab.net>

Some repositories do not have the PullRequest unit present in their configuration

and unfortunately the way that IsUserAllowedToUpdate currently works assumes

that this is an error instead of just returning false.

This PR simply swallows this error allowing the function to return false.

Fix #20621

Signed-off-by: Andrew Thornton <art27@cantab.net>


Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Co-authored-by: Lauris BH <lauris@nix.lv>

This adds support for getting the user's full name from the reverse

proxy in addition to username and email.

Tested locally with caddy serving as reverse proxy with Tailscale

authentication.

Signed-off-by: Will Norris <will@tailscale.com>


Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

This PR rewrites the invisible unicode detection algorithm to more

closely match that of the Monaco editor on the system. It provides a

technique for detecting ambiguous characters and relaxes the detection

of combining marks.

Control characters are in addition detected as invisible in this

implementation whereas they are not on monaco but this is related to

font issues.

Close #19913

Signed-off-by: Andrew Thornton <art27@cantab.net>

Generating repositories from a template is done inside a transaction.

Manual rollback on error is not needed and it always results in error

"repository does not exist".

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

This bug affects tests which are sending emails (#20307). Some tests reinitialise the web routes (like `TestNodeinfo`) which messed up the mail templates. There is no reason why the templates should be loaded in the routes method.

* `PROTOCOL`: can be smtp, smtps, smtp+starttls, smtp+unix, sendmail, dummy

* `SMTP_ADDR`: domain for SMTP, or path to unix socket

* `SMTP_PORT`: port for SMTP; defaults to 25 for `smtp`, 465 for `smtps`, and 587 for `smtp+starttls`

* `ENABLE_HELO`, `HELO_HOSTNAME`: reverse `DISABLE_HELO` to `ENABLE_HELO`; default to false + system hostname

* `FORCE_TRUST_SERVER_CERT`: replace the unclear `SKIP_VERIFY`

* `CLIENT_CERT_FILE`, `CLIENT_KEY_FILE`, `USE_CLIENT_CERT`: clarify client certificates here

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

- Add a new push mirror to specific repository

- Sync now ( send all the changes to the configured push mirrors )

- Get list of all push mirrors of a repository

- Get a push mirror by ID

- Delete push mirror by ID

Signed-off-by: Mohamed Sekour <mohamed.sekour@exfo.com>

Signed-off-by: Andrew Thornton <art27@cantab.net>

Co-authored-by: zeripath <art27@cantab.net>

* Add latest commit's SHA to content response

- When requesting the contents of a filepath, add the latest commit's

SHA to the requested file.

- Resolves #12840

* Add swagger

* Fix NPE

* Fix tests

* Hook into LastCommitCache

* Move AddLastCommitCache to a common nogogit and gogit file

Signed-off-by: Andrew Thornton <art27@cantab.net>

* Prevent NPE

Co-authored-by: Andrew Thornton <art27@cantab.net>

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

There is a subtle bug in the code relating to collating the results of

`git ls-files -u -z` in `unmergedFiles()`. The code here makes the

mistake of assuming that every unmerged file will always have a stage 1

conflict, and this results in conflicts that occur in stage 3 only being

dropped.

This PR simply adjusts this code to ensure that any empty unmergedFile

will always be passed down the channel.

The PR also adds a lot of Trace commands to attempt to help find future

bugs in this code.

Fix #19527

Signed-off-by: Andrew Thornton <art27@cantab.net>

Sometimes users want to receive email notifications for messages they create or reply to.

This adds an option to the personal preferences that lets users choose.

Closes #20149

The LastCommitCache code is a little complex and there is unnecessary

duplication between the gogit and nogogit variants.

This PR adds the LastCommitCache as a field to the git.Repository and

pre-creates it in the ReferencesGit helpers etc. There has been some

simplification and unification of the variant code.

Signed-off-by: Andrew Thornton <art27@cantab.net>

Use Unicode placeholders to replace HTML tags and HTML entities first, then do diff, then recover the HTML tags and HTML entities. Now the code diff with highlight has stable behavior, and won't emit broken tags.

* Fixes issue #19603 (Not able to merge commit in PR when branches content is same, but different commit id)

* fill HeadCommitID in PullRequest

* compare real commits ID as check for merging

* based on @zeripath patch in #19738

Support synchronizing with the push mirrors whenever new commits are pushed or synced from pull mirror.

Related Issues: #18220

Co-authored-by: delvh <dev.lh@web.de>

Co-authored-by: zeripath <art27@cantab.net>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

The uid provided to the group filter must be properly escaped using the provided

ldap.EscapeFilter function.
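To show what the escaping protects against, here is a simplified, self-contained stand-in for `ldap.EscapeFilter`. It only covers the characters RFC 4515 treats as special; the function names and the filter template are illustrative assumptions, and real code should call the library function:

```go
package main

import (
	"fmt"
	"strings"
)

// escapeFilterValue escapes the characters that RFC 4515 treats as special in
// a filter assertion value. It is a simplified stand-in for ldap.EscapeFilter.
func escapeFilterValue(s string) string {
	var b strings.Builder
	for i := 0; i < len(s); i++ {
		switch c := s[i]; c {
		case '\\', '*', '(', ')', 0:
			fmt.Fprintf(&b, "\\%02x", c)
		default:
			b.WriteByte(c)
		}
	}
	return b.String()
}

// groupFilter fills a "%s"-style group filter template with the escaped uid.
func groupFilter(template, uid string) string {
	return fmt.Sprintf(template, escapeFilterValue(uid))
}

func main() {
	// Without escaping, this uid would inject an extra filter clause.
	fmt.Println(groupFilter("(memberUid=%s)", "bob)(uid=*"))
}
```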

Fix #20181

Signed-off-by: Andrew Thornton <art27@cantab.net>

* Check if project has the same repository id with issue when assign project to issue

* Check if issue's repository id match project's repository id

* Add more permission checking

* Remove invalid argument

* Fix errors

* Add generic check

* Remove duplicated check

* Return error + add check for new issues

* Apply suggestions from code review

Co-authored-by: KN4CK3R <admin@oldschoolhack.me>

Co-authored-by: Gusted <williamzijl7@hotmail.com>

Co-authored-by: KN4CK3R <admin@oldschoolhack.me>

Co-authored-by: 6543 <6543@obermui.de>

* Refactor `i18n` to `locale`

- Currently we're using the `i18n` variable naming for the `locale`

struct. This contains locale's specific information and cannot be used

for general i18n purpose, therefore refactoring it to `locale` makes

more sense.

- Ref: https://github.com/go-gitea/gitea/pull/20096#discussion_r906699200

* Update routers/install/install.go

* Prototyping

* Start work on creating offsets

* Modify tests

* Start prototyping with actual MPH

* Twiddle around

* Twiddle around comments

* Convert templates

* Fix external languages

* Fix latest translation

* Fix some test

* Tidy up code

* Use simple map

* go mod tidy

* Move back to data structure

- Uses less memory by creating for each language a map.

* Apply suggestions from code review

Co-authored-by: delvh <dev.lh@web.de>

* Add some comments

* Fix tests

* Try to fix tests

* Use en-US as defacto fallback

* Use correct slices

* refactor (#4)

* Remove TryTr, add log for missing translation key

* Refactor i18n

- Separate dev and production locale stores.

- Allow for live-reloading in dev mode.

Co-authored-by: zeripath <art27@cantab.net>

* Fix live-reloading & check for errors

* Make linter happy

* live-reload with periodic check (#5)

* Fix tests

Co-authored-by: delvh <dev.lh@web.de>

Co-authored-by: 6543 <6543@obermui.de>

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Co-authored-by: zeripath <art27@cantab.net>

When migrating git repositories we should ensure that the commit-graph is written.

Signed-off-by: Andrew Thornton <art27@cantab.net>

Co-authored-by: delvh <dev.lh@web.de>

* clean git support for ver < 2.0

* fine tune tests for markup (which requires git module)

* remove unnecessary comments

* try to fix tests

* try test again

* use const for GitVersionRequired instead of var

* try to fix integration test

* Refactor CheckAttributeReader to make a *git.Repository version

* update document for commit signing with Gitea's internal gitconfig

* update document for commit signing with Gitea's internal gitconfig

Co-authored-by: Andrew Thornton <art27@cantab.net>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

- Always make a best-effort attempt to fetch the repositories; only if even that
fails, report the "disconnected mirror found" error.

- *Partially* resolves #19928

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

* Fix cli command restore-repo: "units" should be parsed as StringSlice because after #15790 it's read by c.StringSlice("units"). Before, the "units" were processed by strings.Split

* Add checking for invalid unit names

Co-authored-by: 6543 <6543@obermui.de>

* Move access and repo permission to models/perm/access

* fix test

* fix git test

* Move functions sequence

* Some improvements per @KN4CK3R and @delvh

* Move issues related code to models/issues

* Move some issues related sub package

* Merge

* Fix test

* Fix test

* Fix test

* Fix test

* Rename some files

* Move access and repo permission to models/perm/access

* fix test

* Move some git related files into sub package models/git

* Fix build

* fix git test

* move lfs to sub package

* move more git related functions to models/git

* Move functions sequence

* Some improvements per @KN4CK3R and @delvh

A pr.Reviewer may be nil when migrating from Gitea if this is a team

request review.

We do not migrate teams therefore we cannot map these requests, but we can

migrate user requests.

Signed-off-by: Andrew Thornton <art27@cantab.net>

* Move some repository related code into sub package

* Move more repository functions out of models

* Fix lint

* Some performance optimization for webhooks and others

* some refactors

* Fix lint

* Fix

* Update modules/repository/delete.go

Co-authored-by: delvh <dev.lh@web.de>

* Fix test

* Merge

* Fix test

* Fix test

* Fix test

* Fix test

Co-authored-by: delvh <dev.lh@web.de>

Fixes #12338

This allows us to talk to the API with our ssh certificate (and/or ssh-agent) without needing to fetch an API key or tokens.

It will just automatically work when users have added their ssh principal in gitea.

This needs client code in tea

Update: also support normal pubkeys

ref: https://tools.ietf.org/html/draft-cavage-http-signatures

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Co-authored-by: zeripath <art27@cantab.net>

Signed-off-by: Andrew Thornton <art27@cantab.net>

When Gitea is running as PID 1 git will occasionally orphan child processes leading

to (defunct) processes. This PR simply sets Setpgid to true on these child processes

meaning that these defunct processes will also be correctly reaped.
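In Go this is a field on `syscall.SysProcAttr`. A minimal sketch, assuming a Unix-like build target (the field does not exist on Windows) and a hypothetical helper name:

```go
package main

import (
	"fmt"
	"os/exec"
	"syscall"
)

// newGitCommand prepares a git invocation that runs in its own process
// group. Setpgid is only available on Unix-like systems.
func newGitCommand(args ...string) *exec.Cmd {
	cmd := exec.Command("git", args...)
	cmd.SysProcAttr = &syscall.SysProcAttr{Setpgid: true}
	return cmd
}

func main() {
	cmd := newGitCommand("version")
	fmt.Println(cmd.SysProcAttr.Setpgid)
}
```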

Fix #19077

Signed-off-by: Andrew Thornton <art27@cantab.net>

A `repo_model.Mirror` repository field (`.Repo`) will not automatically

be set, but is used without checking in mirror_pull.go:UpdateAddress.

This will cause an NPE.

This PR changes UpdateAddress to use the helper function GetRepository()

helping prevent future NPEs but also changes modules/context/repo.go to

ensure that the Mirror.Repo is set.

Signed-off-by: Andrew Thornton <art27@cantab.net>

Co-authored-by: techknowlogick <techknowlogick@gitea.io>

* Make Ctrl+Enter (quick submit) work for issue comment and wiki editor

* Remove the required `SubmitReviewForm.Type`, empty type (triggered by quick submit) means "comment"

* Merge duplicate code

* Use a better OlderThan for DeleteInactiveUsers

- Currently the OlderThan is zero, for instances that enable or run this

task this could actually delete just new users that still need to

confirm their email. This patch fixes that by setting the default to the

`ActiveCodeLives` setting, which corresponds to the amount of time that

a user can activate their account, thus avoiding the issue of deleting

unactivated email users.

* Use correct duration

* Update go tool dependencies

Updated all tool dependencies to latest tags, hoping CI will like it.

* fix new lint errors

* handle more strings.Title cases

* remove lint skip

* Fix indentation

Signed-off-by: kolaente <k@knt.li>

* Add option to merge a pr right now without waiting for the checks to succeed

Signed-off-by: kolaente <k@knt.li>

* Fix lint

Signed-off-by: kolaente <k@knt.li>

* Add scheduled pr merge to tables used for testing

Signed-off-by: kolaente <k@knt.li>

* Add status param to make GetPullRequestByHeadBranch reusable

Signed-off-by: kolaente <k@knt.li>

* Move "Merge now" to a separate button to make the ui clearer

Signed-off-by: kolaente <k@knt.li>

* Update models/scheduled_pull_request_merge.go

Co-authored-by: 赵智超 <1012112796@qq.com>

* Update web_src/js/index.js

Co-authored-by: 赵智超 <1012112796@qq.com>

* Update web_src/js/index.js

Co-authored-by: 赵智超 <1012112796@qq.com>

* Re-add migration after merge

* Fix frontend lint

* Fix version compare

* Add vendored dependencies

* Add basic tests

* Make sure the api route is capable of scheduling PRs for merging

* Fix comparing version

* make vendor

* adopt refactor

* apply suggestion: User -> Doer

* init var once

* Fix Test

* Update templates/repo/issue/view_content/comments.tmpl

* adopt

* nits

* next

* code format

* lint

* use same name schema; rm CreateUnScheduledPRToAutoMergeComment

* API: can not create schedule twice

* Add TestGetBranchNamesForSha

* nits

* new go routine for each pull to merge

* Update models/pull.go

Co-authored-by: a1012112796 <1012112796@qq.com>

* Update models/scheduled_pull_request_merge.go

Co-authored-by: a1012112796 <1012112796@qq.com>

* fix & add renaming suggestions

* Update services/automerge/pull_auto_merge.go

Co-authored-by: a1012112796 <1012112796@qq.com>

* fix conflict relicts

* apply latest refactors

* fix: migration after merge

* Update models/error.go

Co-authored-by: delvh <dev.lh@web.de>

* Update options/locale/locale_en-US.ini

Co-authored-by: delvh <dev.lh@web.de>

* Update options/locale/locale_en-US.ini

Co-authored-by: delvh <dev.lh@web.de>

* adapt latest refactors

* fix test

* use more context

* skip potential edgecases

* document func usage

* GetBranchNamesForSha() -> GetRefsBySha()

* start refactoring

* adjust to new changes

* nit

* docu nit

* the great check move

* move checks for branchprotection into own package

* resolve todo now ...

* move & rename

* unexport if possible

* fix

* check if merge is allowed before merge on scheduled pull

* debugg

* wording

* improve SetDefaults & nits

* NotAllowedToMerge -> DisallowedToMerge

* fix test

* merge files

* use package "errors"

* merge files

* add string names

* other implementation for gogit

* adapt refactor

* more context for models/pull.go

* GetUserRepoPermission use context

* more ctx

* use context for loading pull head/base-repo

* more ctx

* more ctx

* models.LoadIssueCtx()

* models.LoadIssueCtx()

* Handle pull_service.Merge in one DB transaction

* add TODOs

* next

* next

* next

* more ctx

* more ctx

* Start refactoring structure of old pull code ...

* move code into new packages

* shorter names ... and finish **restructure**

* Update models/branches.go

Co-authored-by: zeripath <art27@cantab.net>

* finish UpdateProtectBranch

* more and fix

* update datum

* template: use "svg" helper

* rename prQueue 2 prPatchCheckerQueue

* handle automerge in queue

* lock pull on git&db actions ...

* lock pull on git&db actions ...

* add TODO notes

* the regex

* transaction in tests

* GetRepositoryByIDCtx

* shorter table name and lint fix

* close transaction before notify

* Update models/pull.go

* next

* CheckPullMergable check all branch protections!

* Update routers/web/repo/pull.go

* CheckPullMergable check all branch protections!

* Revert "PullService lock via pullID (#19520)" (for now...)

This reverts commit 6cde7c9159a5ea75a10356feb7b8c7ad4c434a9a.

* Update services/pull/check.go

* Use for a repo action one database transaction

* Apply suggestions from code review

* Apply suggestions from code review

Co-authored-by: delvh <dev.lh@web.de>

* Update services/issue/status.go

Co-authored-by: delvh <dev.lh@web.de>

* Update services/issue/status.go

Co-authored-by: delvh <dev.lh@web.de>

* use db.WithTx()

* gofmt

* make pr.GetDefaultMergeMessage() context aware

* make MergePullRequestForm.SetDefaults context aware

* use db.WithTx()

* pull.SetMerged only with context

* fix deadlock in `test-sqlite#TestAPIBranchProtection`

* dont forget templates

* db.WithTx allow to set the parentCtx

* handle db transaction in service packages but not router

* issue_service.ChangeStatus had just caused another deadlock :/

it has something to do with how the notification package is handled

* if we merge a pull in one database transaction, we get a lock, because merge invokes an internal API that can't handle open db sessions to the same repo

* adjust to current master

* Apply suggestions from code review

Co-authored-by: delvh <dev.lh@web.de>

* dont open db transaction in router

* make generate-swagger

* one _success less

* wording nit

* rm

* adapt

* remove not needed test files

* rm less diff & use attr in JS

* ...

* Update services/repository/files/commit.go

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

* adjust db schema for PullAutoMerge

* skip broken pull refs

* more context in error messages

* remove webUI part for another pull

* remove more WebUI only parts

* API: add CancleAutoMergePR

* Apply suggestions from code review

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

* fix lint

* Apply suggestions from code review

* cancle -> cancel

Co-authored-by: delvh <dev.lh@web.de>

* change queue identifier

* fix swagger

* prevent nil issue

* fix and dont drop error

* as per @zeripath

* Update integrations/git_test.go

Co-authored-by: delvh <dev.lh@web.de>

* Update integrations/git_test.go

Co-authored-by: delvh <dev.lh@web.de>

* more declarative integration tests (dedup code)

* use assert.False/True helper

Co-authored-by: 赵智超 <1012112796@qq.com>

Co-authored-by: 6543 <6543@obermui.de>

Co-authored-by: delvh <dev.lh@web.de>

Co-authored-by: zeripath <art27@cantab.net>

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

In case the bound user cannot access its own attributes.

Signed-off-by: Gwilherm Folliot <gwilherm55fo@gmail.com>

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

- don't overwrite err with nil unintentionally

- rename CheckPRReadyToMerge to CheckPullBranchProtections

- rename prQueue to prPatchCheckerQueue

from #9307

Co-authored-by: delvh <dev.lh@web.de>

* Apply DefaultUserIsRestricted in CreateUser

* Enforce system defaults in CreateUser

Allow for overwrites with CreateUserOverwriteOptions

* Fix compilation errors

* Add "restricted" option to create user command

* Add "restricted" option to create user admin api

* Respect default setting.Service.RegisterEmailConfirm and setting.Service.RegisterManualConfirm where needed

* Revert "Respect default setting.Service.RegisterEmailConfirm and setting.Service.RegisterManualConfirm where needed"

This reverts commit ee95d3e8dc9e9fff4fa66a5111e4d3930280e033.

Adds a feature [like GitHub has](https://docs.github.com/en/pull-requests/collaborating-with-pull-requests/proposing-changes-to-your-work-with-pull-requests/creating-a-pull-request-from-a-fork) (step 7).

If you create a new PR from a forked repo, you can select (and change later, but only if you are the PR creator/poster) the "Allow edits from maintainers" option.

Then users with write access to the base branch get more permissions on this branch:

* use the update pull request button

* push directly from the command line (`git push`)

* edit/delete/upload files via web UI

* use related API endpoints

You can't merge PRs into this branch with only this option enabled; for that you still need "full" code write permissions.
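The rule described above can be pictured roughly as follows. This is only an illustrative sketch, not the code from this PR: the `pullRequest` and `user` types, their fields, and both function names are hypothetical and were chosen purely for the example.

```go
package main

import "fmt"

// pullRequest holds only the field this sketch needs (hypothetical type).
type pullRequest struct {
	AllowMaintainerEdit bool // the "Allow edits from maintainers" option
}

// user describes one user's permissions relative to a pull request
// (hypothetical type).
type user struct {
	CanWriteHeadRepo bool // "full" code write permission on the fork
	CanWriteBase     bool // write access to the base branch
}

// canPushToHeadBranch reports whether u may push to, or edit files on,
// the head branch of pr.
func canPushToHeadBranch(u user, pr pullRequest) bool {
	return u.CanWriteHeadRepo || (pr.AllowMaintainerEdit && u.CanWriteBase)
}

// canMergeIntoHeadBranch reports whether u may merge other PRs into the
// head branch; the option does not grant this.
func canMergeIntoHeadBranch(u user) bool {
	return u.CanWriteHeadRepo
}

func main() {
	maintainer := user{CanWriteBase: true}
	pr := pullRequest{AllowMaintainerEdit: true}
	fmt.Println("push:", canPushToHeadBranch(maintainer, pr))
	fmt.Println("merge:", canMergeIntoHeadBranch(maintainer))
}
```

The point of splitting the two checks is that the option widens push and edit access only; merging stays tied to write permission on the fork itself.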

This feature has a pretty big impact on the permission system. I might have forgotten to change some things or missed security vulnerabilities. In that case, please leave a review or comment on this PR.

Closes #17728

Co-authored-by: 6543 <6543@obermui.de>

Within doArchive there is a service goroutine that performs the

archiving function. This goroutine reports its error using a `chan

error` called `done`. Prior to this PR this channel had 0 capacity

meaning that the goroutine would block until the `done` channel was

cleared - however there are a couple of ways in which this channel might

not be read.

The simplest solution is to add a single space of capacity to the

channel, which will mean that the goroutine will always complete and

even if the `done` channel is not read it will be simply garbage

collected away.

(The PR also contains two other places when setting up the indexers

which do not leak but where the blocking of the sending goroutine is

also unnecessary and so we should just add a small amount of capacity

and let the sending goroutine complete as soon as it can.)
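As a minimal sketch of the difference (not the actual `doArchive` code), the following compares a goroutine sending on an unbuffered `done` channel with one sending on a channel of capacity 1 when nobody ever reads. The `archive`, `startArchive`, and `completesWithoutReader` helpers and the `finished` channel are hypothetical names used only for this illustration.

```go
package main

import (
	"errors"
	"fmt"
	"time"
)

// archive stands in for the real archiving work (hypothetical helper).
func archive() error {
	return errors.New("archive failed")
}

// startArchive runs archive in a goroutine and reports its result on done.
// finished is closed once the goroutine has returned, so a caller can
// observe whether the send on done blocked.
func startArchive(capacity int) (done chan error, finished chan struct{}) {
	done = make(chan error, capacity)
	finished = make(chan struct{})
	go func() {
		defer close(finished)
		done <- archive() // blocks forever if capacity is 0 and nobody reads
	}()
	return done, finished
}

// completesWithoutReader reports whether the goroutine returns even though
// nothing ever receives from done.
func completesWithoutReader(capacity int) bool {
	_, finished := startArchive(capacity)
	select {
	case <-finished:
		return true
	case <-time.After(200 * time.Millisecond):
		return false
	}
}

func main() {
	fmt.Println("unbuffered:", completesWithoutReader(0))
	fmt.Println("capacity 1:", completesWithoutReader(1))
}
```

With capacity 1 the send succeeds immediately, the goroutine returns, and the unread channel becomes garbage once it is unreachable. In the unbuffered case the goroutine stays parked on the send, which is the leak.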

Signed-off-by: Andrew Thornton <art27@cantab.net>

Co-authored-by: 6543 <6543@obermui.de>

This gets the necessary data to the issuelist for it to support a clickable commit status icon which pops up the full list of commit statuses related to the commit. It accomplishes this without any additional queries or fetching as the existing codepath was already doing the necessary work but only returning the "last" status. All methods were wrapped to call the least-filtered version of each function in order to maximize code reuse.

Note that I originally left `getLastCommitStatus()` in `pull.go` which called to the new function, but `make lint` complained that it was unused, so I removed it. I would have preferred to keep it, but alas.

The only thing I'd still like to do here is force these popups to happen to the right by default instead of the left. I see that the only other place this is popping up right is on view_list.tmpl, but I can't figure out how/why right now.

Fixes #18810

* Set correct PR status on 3way on conflict checking

- When 3-way merge is enabled for conflict checking, it has a new

interesting behavior that it doesn't return any error when it found a

conflict, so we change the condition to not check for the error, but

instead check if conflictedfiles is populated. This fixes an issue

whereby the PR status wasn't correctly set on conflicted PRs.

- Refactor the mergeable property (which was incorrectly set and led me to this

bug) to be more maintainable.

- Add a dedicated test for conflict checking, which should prevent

future issues with this.

* Fix linter

* Don't allow merging PR's which are being conflict checked

- When a PR is still being conflict checked, don't allow the PR to be

merged(the merge button could already be visible before e.g. a new

commit was pushed to the PR).

- Relevant (should prevent such issues from happening): #19352
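The changed condition can be illustrated with a small sketch. The types and names here (`pullStatus`, `checkResult`, `statusFor`) are hypothetical stand-ins rather than the identifiers used in this PR; the sketch only shows the idea of deciding on the list of conflicted files instead of the returned error, and of treating "still checking" as not mergeable.

```go
package main

import "fmt"

// pullStatus is a hypothetical stand-in for the PR status values.
type pullStatus int

const (
	statusChecking pullStatus = iota
	statusMergeable
	statusConflict
	statusError
)

// checkResult is a hypothetical result of a 3-way conflict check.
type checkResult struct {
	ConflictedFiles []string
}

// statusFor derives the PR status from a finished conflict check.
// A 3-way check may return a nil error even when conflicts exist, so the
// decision is based on ConflictedFiles rather than on err alone.
func statusFor(res checkResult, err error) pullStatus {
	if err != nil {
		return statusError
	}
	if len(res.ConflictedFiles) > 0 {
		return statusConflict
	}
	return statusMergeable
}

// canMerge allows merging only once the check has finished cleanly.
func canMerge(s pullStatus) bool {
	return s == statusMergeable
}

func main() {
	s := statusFor(checkResult{ConflictedFiles: []string{"README.md"}}, nil)
	fmt.Println("conflict:", s == statusConflict, "can merge:", canMerge(s))
	fmt.Println("can merge while checking:", canMerge(statusChecking))
}
```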

Co-authored-by: delvh <dev.lh@web.de>

Remove two unmaintained vendor packages `i18n` and `paginater`. Changes:

* Rewrite `i18n` package with a more clear fallback mechanism. Fix an unstable `Tr` behavior, add more tests.

* Refactor the legacy `Paginater` to `Paginator`, test cases are kept unchanged.

Trivial enhancement (no breaking changes for end users):

* Use the first locale in the LANGS setting option as the default, and add a log message to avoid surprising users.

Follows: #19284

* The `CopyDir` is only used inside test code

* Rewrite `ToSnakeCase` with more test cases

* The `RedisCacher` only puts strings into the cache, so here we use an internal `toStr` to replace the legacy `ToStr`

* The `UniqueQueue` can use string as ID directly, no need to call `ToStr`

Follows #19266, #8553, Close #18553, now there are only three `Run..(&RunOpts{})` functions.

* before: `stdout, err := RunInDir(path)`

* now: `stdout, _, err := RunStdString(&git.RunOpts{Dir:path})`

Continues on from #19202.

Following the addition of pprof labels we can now more easily understand the relationship between a goroutine and the requests that spawn them.

This PR takes advantage of the labels and adds a few others, then provides a mechanism for the monitoring page to query the pprof goroutine profile.

The resulting binary profile is immediately piped into the Google library that parses it, and stack traces are then formed for the goroutines.

If the goroutine is within a context or has been created from a goroutine within a process context it will acquire the process description labels for that process.

The goroutines are mapped to their associated PIDs, and any that do not have an associated PID are placed in a group at the bottom as unbound.

In this way we should be able to more easily examine goroutines that have been stuck.

A manager command `gitea manager processes` is also provided that can export the processes (with or without stacktraces) to the command line.

Signed-off-by: Andrew Thornton <art27@cantab.net>