The method can't be called with an outer transaction because if the user is not a collaborator the outer transaction will be rolled back even if the inner transaction uses the no-error path.

`has == 0` leads to `return nil` which cancels the transaction. A standalone call of this method does nothing but if used with an outer transaction, that will be canceled.

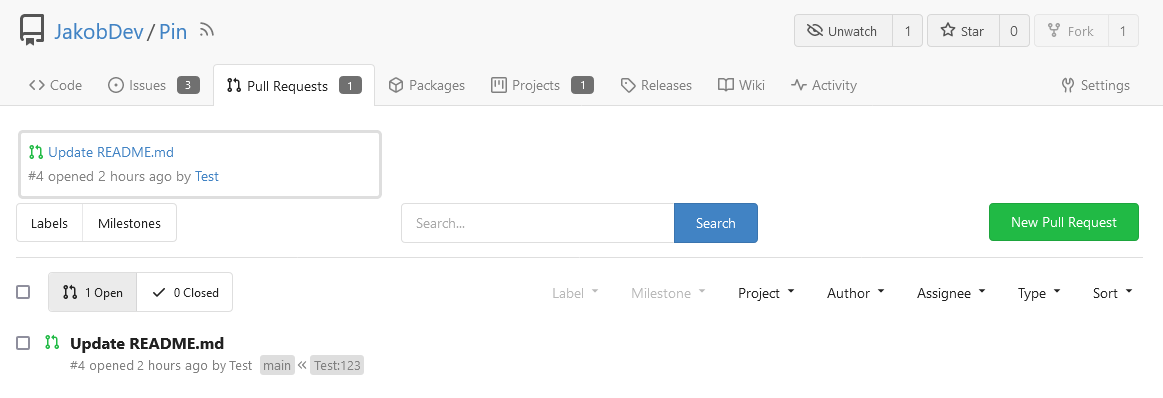

Sometimes you need to work on a feature which depends on another (unmerged) feature.

In this case, you may create a PR based on that feature instead of the main branch.

Currently, such PRs will be closed without the possibility to reopen in case the parent feature is merged and its branch is deleted.

Automatically changing the target branch makes life a lot easier in such cases.

GitHub and Bitbucket behave this way.

Example:

$PR_1$: main <- feature1

$PR_2$: feature1 <- feature2

Currently, merging $PR_1$ and deleting its branch leads to $PR_2$ being closed without the possibility to reopen.

This is both annoying and loses the review history when you open a new PR.

With this change, $PR_2$ will change its target branch to main ($PR_2$: main <- feature2) after $PR_1$ has been merged and its branch has been deleted.

This behavior is enabled by default but can be disabled.

For security reasons, this target branch change will not be executed when merging PRs targeting another repo.
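The retargeting rule described above can be sketched in a few lines of Go. This is only an illustration under simplifying assumptions, not Gitea's implementation: the `PullRequest` type, its fields, and `retargetChildren` are hypothetical names invented for the example.

```go
package main

import "fmt"

// PullRequest is a hypothetical, simplified model used only for this sketch.
type PullRequest struct {
	Name       string
	BaseRepo   string
	HeadRepo   string
	BaseBranch string
	HeadBranch string
}

// retargetChildren returns a copy of open in which every PR that targeted the
// merged PR's (now deleted) head branch targets the merged PR's base instead.
// Nothing is changed when the merged PR targeted another repository.
func retargetChildren(merged PullRequest, open []PullRequest) []PullRequest {
	out := make([]PullRequest, len(open))
	copy(out, open)
	if merged.BaseRepo != merged.HeadRepo {
		return out // cross-repo merge: no automatic retargeting
	}
	for i := range out {
		if out[i].BaseRepo == merged.HeadRepo && out[i].BaseBranch == merged.HeadBranch {
			out[i].BaseBranch = merged.BaseBranch
		}
	}
	return out
}

func main() {
	pr1 := PullRequest{"PR_1", "repo", "repo", "main", "feature1"}
	pr2 := PullRequest{"PR_2", "repo", "repo", "feature1", "feature2"}
	for _, pr := range retargetChildren(pr1, []PullRequest{pr2}) {
		fmt.Printf("%s: %s <- %s\n", pr.Name, pr.BaseBranch, pr.HeadBranch)
	}
}
```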

Fixes #27062

Fixes #18408

---------

Co-authored-by: Denys Konovalov <kontakt@denyskon.de>

Co-authored-by: delvh <dev.lh@web.de>

Fixes #22236

---

Error currently occurring while trying to revert a commit using the `read-tree -m` approach:

> 2022/12/26 16:04:43 ...rvices/pull/patch.go:240:AttemptThreeWayMerge() [E] [63a9c61a] Unable to run read-tree -m! Error: exit status 128 - fatal: this operation must be run in a work tree
> - fatal: this operation must be run in a work tree

We need to clone a non-bare repository for `git read-tree -m` to work. bb371aee6e adds support to create a non-bare cloned temporary upload repository.

After cloning a non-bare temporary upload repository, we [set default index](https://github.com/go-gitea/gitea/blob/main/services/repository/files/cherry_pick.go#L37) (`git read-tree HEAD`).

This operation ends up resetting the git index file (see investigation details below), due to which we need to call `git update-index --refresh` afterward.

Here's the diff of the index file before and after we execute SetDefaultIndex: https://www.diffchecker.com/hyOP3eJy/

Notice that **ctime** and **mtime** are set to 0 after SetDefaultIndex.

You can reproduce the same behavior using these steps:

```bash
$ git clone https://try.gitea.io/me-heer/test.git -s -b main
$ cd test
$ git read-tree HEAD
$ git read-tree -m 1f085d7ed8 1f085d7ed8 9933caed00
error: Entry '1' not uptodate. Cannot merge.
```

After which, we can fix like this:

```
$ git update-index --refresh
$ git read-tree -m 1f085d7ed8 1f085d7ed8 9933caed00
```

As more and more options can be set when creating a repository, I don't think we should put all of them on the creation web page, which would make it look complicated and confusing.

I think we need some rules for deciding which options should or should not appear on the repository creation page. One rule I can imagine: if an option can be changed later and is not a MUST at creation, it can be removed from the page. The trust model is the first such option I found.

This PR removes the trust model selection from the repository creation web page and keeps the others as before.

This is also a preparation for #23894, which will add a choice between SHA1 and SHA256 that cannot be changed once the repository is created.

Fixes #26548

This PR refactors the rendering of markup links. The old code uses `strings.Replace` to change some URLs while the new code uses more context to decide which link should be generated.

The added tests should ensure the same output for the old and new behaviour (besides the bug).

We may need to refactor the rendering a bit more to make it clear how the different helper methods render the input string. There are lots of options (resolve links / images / mentions / git hashes / emojis / ...) but you don't really know which helper uses which options. For example, we currently support images in the user description, which I think should not be allowed:

<details>

<summary>Profile</summary>

https://try.gitea.io/KN4CK3R

</details>

---------

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Fixes #27114.

* In Gitea 1.12 (#9532), a "dismiss stale approvals" branch protection setting was introduced, for ignoring stale reviews when verifying the approval count of a pull request.
* In Gitea 1.14 (#12674), the "dismiss review" feature was added.
* This caused confusion among users (#25858), as "dismiss" now means 2 different things.
* In Gitea 1.20 (#25882), the behavior of the "dismiss stale approvals" branch protection was modified to actually dismiss the stale review.

For some users this new behavior of dismissing the stale reviews is not desirable.

So this PR reintroduces the old behavior as a new "ignore stale approvals" branch protection setting.

---------

Co-authored-by: delvh <dev.lh@web.de>

Fix #28157

This PR fixes possible bugs in the Actions schedule.

## The Changes

- Move `UpdateRepositoryUnit` and `SetRepoDefaultBranch` from models to the service layer
- Remove the schedule plans from the database and cancel waiting and running scheduled tasks in this repository when the Actions unit has been disabled, either for the repository or globally.
- Remove the schedule plans from the database and cancel waiting and running scheduled tasks in this repository when the default branch is changed.

- If there's an error with the Git command in `checkIfPRContentChanged`, the stderr wasn't concatenated to the error, so it was still unclear why the error happened.
- Adds concatenation for stderr to the returned error.

- Ref: https://codeberg.org/forgejo/forgejo/issues/2077

Co-authored-by: Gusted <postmaster@gusted.xyz>

I noticed the `BuildAllRepositoryFiles` function under the Alpine folder

is unused and I thought it was a bug.

But I'm not sure about this. Was it on purpose?

#28361 introduced `syncBranchToDB` in `CreateNewBranchFromCommit`. This

PR will revert the change because it's unnecessary. Every push will

already be checked by `syncBranchToDB`.

This PR also created a test to ensure it's right.

Introduce the new generic deletion methods

- `func DeleteByID[T any](ctx context.Context, id int64) (int64, error)`

- `func DeleteByIDs[T any](ctx context.Context, ids ...int64) error`

- `func Delete[T any](ctx context.Context, opts FindOptions) (int64,

error)`

So, we no longer need any specific deletion method and can just use

the generic ones instead.

Replacement of #28450

Closes #28450

---------

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Using the official Go tool `golang.org/x/tools/cmd/deadcode@latest` mentioned by the [Go blog](https://go.dev/blog/deadcode).

Just run `deadcode .` in the project root folder and it gives a list of unused functions, though it has some false alarms.

This PR removes dead code detected in `models/issues`.

Nowadays, the cache is used almost everywhere in Gitea and it cannot be disabled; otherwise some features become unavailable.

So I think we can just remove the option for enabling the cache. That means the cache cannot be disabled.

Of course, the cache configuration can still be used to set how Gitea uses the cache.

The 4 functions are duplicated, especially as interface methods. I think we just need to keep `MustID` as the only one and remove the other 3.

```
MustID(b []byte) ObjectID
MustIDFromString(s string) ObjectID
NewID(b []byte) (ObjectID, error)
NewIDFromString(s string) (ObjectID, error)
```

Introduced the new interface method `ComputeHash` which will replace the interface `HasherInterface`. Now we don't need to keep two interfaces.

Reintroduced `git.NewIDFromString` and `git.MustIDFromString`. The new functions detect the hash length to decide the object format: if it's 40, then it's SHA1; if it's 64, then it's SHA256. This is only right if the commit ID is a full one, so the parameter should always be a full commit ID.
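The length-based detection can be illustrated with a small standalone sketch. It is not the code of `git.NewIDFromString`; `detectObjectFormat` and its error messages are hypothetical, and the only facts taken from the description are the two lengths (40 for SHA1, 64 for SHA256).

```go
package main

import (
	"encoding/hex"
	"errors"
	"fmt"
)

// detectObjectFormat guesses the object format from the length of a full,
// hex-encoded object ID. Abbreviated IDs are rejected because their length
// says nothing about the hash function that produced them.
func detectObjectFormat(id string) (string, error) {
	if _, err := hex.DecodeString(id); err != nil {
		return "", fmt.Errorf("not a hex object ID: %w", err)
	}
	switch len(id) {
	case 40:
		return "sha1", nil
	case 64:
		return "sha256", nil
	default:
		return "", errors.New("object ID must be a full 40 or 64 character hash")
	}
}

func main() {
	// An abbreviated ID such as this one cannot be classified.
	format, err := detectObjectFormat("1f085d7ed8")
	fmt.Println(format, err)
}
```

This also shows why the parameter has to be a full commit ID: a 10-character prefix is valid hex but matches neither length.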

@AdamMajer Please review.

Update golang.org/x/crypto for CVE-2023-48795 and update other packages.

`go-git` is not updated because it needs time to figure out why some

tests fail.

- Remove `ObjectFormatID`

- Remove function `ObjectFormatFromID`.

- Use `Sha1ObjectFormat` directly but not a pointer because it's an

empty struct.

- Store `ObjectFormatName` in `repository` struct

Refactor Hash interfaces and centralize the hash function. This will allow easier introduction of different hash functions later on.

This forms the "no-op" part of the SHA256 enablement patch.

## Changes

- Add deprecation warning to `Token` and `AccessToken` authentication methods in swagger.
- Add deprecation warning header to API response. Example:

  ```
  HTTP/1.1 200 OK
  ...
  Warning: token and access_token API authentication is deprecated
  ...
  ```

- Add setting `DISABLE_QUERY_AUTH_TOKEN` to reject query string auth tokens entirely. Default is `false`
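The behavior listed above could look roughly like the following `net/http` middleware. This is a sketch, not the PR's code: `queryTokenGuard` and `probe` are hypothetical names, and answering with `401 Unauthorized` when the setting is enabled is an assumption; only the `Warning` header text and the `token` / `access_token` parameter names come from the description.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
)

// queryTokenGuard wraps next. The disable flag stands in for the
// DISABLE_QUERY_AUTH_TOKEN setting.
func queryTokenGuard(disable bool, next http.Handler) http.Handler {
	return http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		q := r.URL.Query()
		if q.Has("token") || q.Has("access_token") {
			if disable {
				// Assumption: reject with 401 when query tokens are disabled.
				http.Error(w, "query string auth tokens are disabled", http.StatusUnauthorized)
				return
			}
			w.Header().Set("Warning", "token and access_token API authentication is deprecated")
		}
		next.ServeHTTP(w, r)
	})
}

// probe sends one request through the guard and reports the status code and
// the Warning header of the response.
func probe(disable bool, target string) (int, string) {
	ok := http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		w.WriteHeader(http.StatusOK)
	})
	rec := httptest.NewRecorder()
	req := httptest.NewRequest(http.MethodGet, target, nil)
	queryTokenGuard(disable, ok).ServeHTTP(rec, req)
	return rec.Code, rec.Header().Get("Warning")
}

func main() {
	fmt.Println(probe(false, "/api/v1/user?token=abc"))
	fmt.Println(probe(true, "/api/v1/user?token=abc"))
}
```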

## Next steps

- `DISABLE_QUERY_AUTH_TOKEN` should be true in a subsequent release and the methods should be removed in swagger
- `DISABLE_QUERY_AUTH_TOKEN` should be removed and the implementation of the auth methods in question should be removed

## Open questions

- Should there be further changes to the swagger documentation? Deprecation is not yet supported for security definitions (coming in [OpenAPI Spec version 3.2.0](https://github.com/OAI/OpenAPI-Specification/issues/2506))
- Should the API router logger sanitize URLs that use `token` or `access_token`? (This is obviously an insufficient solution on its own)

---------

Co-authored-by: delvh <dev.lh@web.de>

- Currently there's code to recover gracefully from panics that happen within the execution of cron tasks. However, this recover code wasn't being run, because `RunWithShutdownContext` also contains code to recover from any panic and then gracefully shut down Forgejo. Because `RunWithShutdownContext` registers that code last, it would get run first, which in this case is not the behavior that we want.
- Move the recover code to inside the function, so that it is run first, before `RunWithShutdownContext`'s recover code (which is now a no-op).
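The ordering after the fix can be reproduced with two deferred `recover` calls. The sketch below uses simplified stand-ins (`runWithShutdownContext`, `cronTask`, and the `events` log are hypothetical and do not match Forgejo's real signatures); it only demonstrates that a `recover` deferred inside the task function handles the panic before the outer one can see it.

```go
package main

import "fmt"

// runWithShutdownContext is a stand-in for the outer wrapper: it recovers
// from any panic that reaches it and would then shut the process down.
func runWithShutdownContext(run func(), events *[]string) {
	defer func() {
		if r := recover(); r != nil {
			*events = append(*events, "outer: shutting down")
		}
	}()
	run()
}

// cronTask recovers inside the task itself, so the panic never propagates
// to the outer wrapper.
func cronTask(events *[]string) {
	defer func() {
		if r := recover(); r != nil {
			*events = append(*events, fmt.Sprintf("task: recovered from %v", r))
		}
	}()
	panic("boom")
}

func main() {
	var events []string
	runWithShutdownContext(func() { cronTask(&events) }, &events)
	fmt.Println(events)
}
```

Because the inner `recover` consumes the panic, the outer deferred function sees `nil` and does nothing.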

Fixes: https://codeberg.org/forgejo/forgejo/issues/1910

Co-authored-by: Gusted <postmaster@gusted.xyz>

Fix #28056

This PR checks whether the repository has zero branches in the database when a branch is pushed. If so, the repository hasn't been synced.

This happens when a user upgrades from v1.20 to v1.21 and then pushes branches without visiting the repository in the web interface. All repository routers check whether a branch sync is necessary, but push has no such check.

Every repository is in one of two states: synced or not synced. If there are zero branches for a repository, it is assumed to be not synced; otherwise it is synced. If it is synced, we just need to update the branch or insert the new branch; otherwise we do a full sync. So for every push there is almost no extra load. This performs better than your approach.

For the implementation, we first try to update the branch. If the update succeeds with affected records > 0, we are done, because that means the branch was already in the database. If no record is affected, the branch does not exist in the database, and there are two possibilities: either this is a new branch, and we just need to insert the record, or the branches haven't been synced, and we need to sync all the branches into the database.
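The decision flow described above can be sketched with an in-memory map standing in for the branch table. Everything here is hypothetical (`branchTable`, `syncOnPush`, and the returned labels); the real code works against the database and the Git repository.

```go
package main

import "fmt"

// branchTable maps branch name to commit ID and stands in for the database.
type branchTable map[string]string

// syncOnPush applies the update-first strategy: update the row if it exists,
// otherwise either insert the new branch or, when the table is empty, treat
// the repository as never synced and copy every branch from Git.
func syncOnPush(db branchTable, gitBranches map[string]string, name, commit string) string {
	if _, ok := db[name]; ok {
		db[name] = commit // affected records > 0: nothing else to do
		return "updated"
	}
	if len(db) == 0 {
		for n, c := range gitBranches {
			db[n] = c
		}
		return "full sync"
	}
	db[name] = commit
	return "inserted"
}

func main() {
	db := branchTable{}
	git := map[string]string{"main": "c1", "dev": "c2"}
	fmt.Println(syncOnPush(db, git, "dev", "c2"))
	fmt.Println(syncOnPush(db, git, "dev", "c3"))
	fmt.Println(syncOnPush(db, git, "feature", "c4"))
}
```

The common case (an already known branch) costs a single update, which is why the extra load per push stays small.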

The function `GetByBean` has an obvious defect: fields with empty values are ignored. Users then get a wrong result, which could lead to a security problem.

To avoid this possibility, this PR removes the function `GetByBean` and all references to it.

Some new generic functions have been introduced to replace it.

The recommended usage is as follows.

```go
// query an object by its ID
obj, err := db.GetByID[Object](ctx, id)

// query with other conditions
obj, err := db.Get[Object](ctx, builder.Eq{"a": a, "b": b})
```
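To see why ignoring empty fields is dangerous, here is a small in-memory imitation of query-by-example. It does not use xorm or Gitea's `db` package; `User`, `findByExample`, and `findByName` are hypothetical names for this sketch.

```go
package main

import "fmt"

// User is a hypothetical record type for this sketch.
type User struct {
	ID   int64
	Name string
}

// findByExample imitates query-by-example: zero-valued fields of the example
// are silently dropped from the filter.
func findByExample(users []User, example User) (User, bool) {
	for _, u := range users {
		if example.ID != 0 && u.ID != example.ID {
			continue
		}
		if example.Name != "" && u.Name != example.Name {
			continue
		}
		return u, true
	}
	return User{}, false
}

// findByName always applies the condition, even for an empty name.
func findByName(users []User, name string) (User, bool) {
	for _, u := range users {
		if u.Name == name {
			return u, true
		}
	}
	return User{}, false
}

func main() {
	users := []User{{1, "admin"}, {2, "bob"}}
	fmt.Println(findByExample(users, User{Name: ""})) // matches the first row
	fmt.Println(findByName(users, ""))                // matches nothing
}
```

With an empty name the example-based lookup has no conditions left and returns an arbitrary first row, while the explicit condition correctly finds nothing.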

- Push commit updates are run in a queue, and updates can come from less traceable places such as Git over SSH; therefore, add more information about which repository the pushUpdate failed on.

Refs: https://codeberg.org/forgejo/forgejo/pulls/1723

(cherry picked from commit 37ab9460394800678d2208fed718e719d7a5d96f)

Co-authored-by: Gusted <postmaster@gusted.xyz>

- Tell the binding middleware which locale should be used for the required error.

- Resolves https://codeberg.org/forgejo/forgejo/issues/1683

(cherry picked from commit 5a2d7966127b5639332038e9925d858ab54fc360)

Co-authored-by: Gusted <postmaster@gusted.xyz>

Changed behavior to calculate the package quota limit using the package `creator ID` instead of the `owner ID`.

Currently, users are allowed to create an unlimited number of organizations, each of which has its own package limit quota, so users can obtain unlimited package space across different organization scopes. This fix calculates the package quota based on the `package version creator ID` instead of the `package version owner ID` (which might be an organization), so that users cannot take more space than the configured package settings allow.

Also, there is a side case in which users can publish packages to a specific package version that was initially published by a different user, consuming that user's package size quota. The behavior with this fix should be better because the total amount of space is limited to the quota for users sharing the same organization scope.
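The difference between the two accounting schemes can be shown with a tiny calculation. This is an illustrative sketch only; `packageVersion`, `usedByOwner`, and `usedByCreator` are hypothetical names and the sizes are made up.

```go
package main

import "fmt"

// packageVersion is a hypothetical, reduced record for this sketch.
type packageVersion struct {
	OwnerID   int64 // user or organization that owns the package
	CreatorID int64 // user who published the version
	Size      int64
}

// usedByOwner sums the sizes per owner (the old accounting).
func usedByOwner(versions []packageVersion, ownerID int64) int64 {
	var total int64
	for _, v := range versions {
		if v.OwnerID == ownerID {
			total += v.Size
		}
	}
	return total
}

// usedByCreator sums the sizes per publishing user (the new accounting).
func usedByCreator(versions []packageVersion, creatorID int64) int64 {
	var total int64
	for _, v := range versions {
		if v.CreatorID == creatorID {
			total += v.Size
		}
	}
	return total
}

func main() {
	// User 1 publishes into two organizations (owners 10 and 11).
	versions := []packageVersion{
		{OwnerID: 10, CreatorID: 1, Size: 60},
		{OwnerID: 11, CreatorID: 1, Size: 60},
	}
	const limit = 100
	fmt.Println("owner 10 over limit:", usedByOwner(versions, 10) > limit)
	fmt.Println("creator 1 over limit:", usedByCreator(versions, 1) > limit)
}
```

Counting per owner, each organization stays under the limit; counting per creator, the same user is over it.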

Fixes https://codeberg.org/forgejo/forgejo/issues/1458

Some mails such as issue creation mails are missing the reply-to-comment

address. This PR fixes that and specifies which comment types should get

a reply-possibility.

Fixes #27819

We have support for two factor logins with the normal web login and with

basic auth. For basic auth the two factor check was implemented at three

different places and you need to know that this check is necessary. This

PR moves the check into the basic auth itself.

- On user deletion, delete action runners that the user has created.

- Add a database consistency check to remove action runners whose owner no longer exists.

- Resolves https://codeberg.org/forgejo/forgejo/issues/1720

(cherry picked from commit 009ca7223dab054f7f760b7ccae69e745eebfabb)

Co-authored-by: Gusted <postmaster@gusted.xyz>

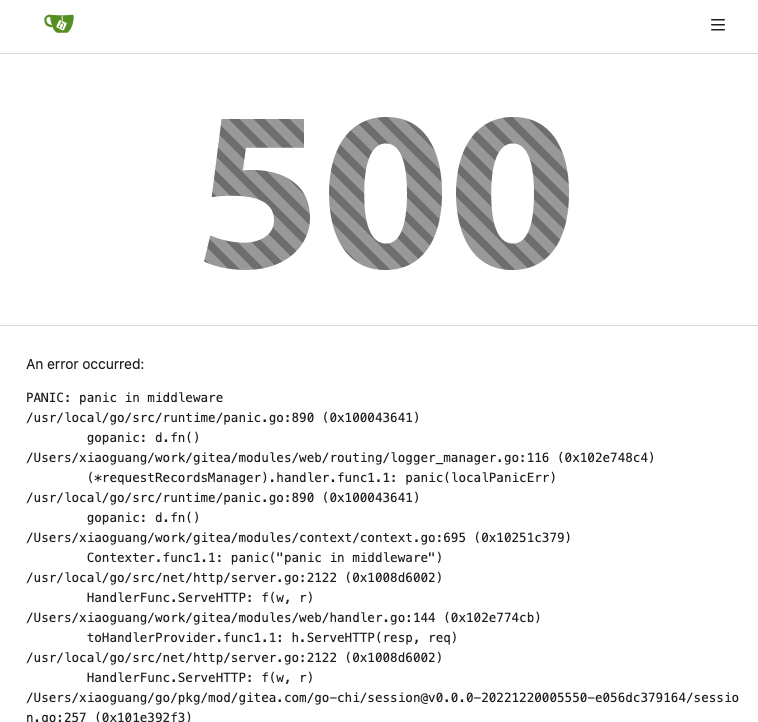

Steps to reproduce:

First, create a new OAuth2 source.

Then, have a user log in with this OAuth2 source.

Disable the OAuth2 source.

Visit users -> settings -> security; a 500 error will be displayed.

This is because this page only loads active OAuth2 sources, not all OAuth2 sources.

After many refactoring PRs for the "locale" and "template context function", the ".locale" is no longer needed for web templates.

This PR does a clean up for:

1. Remove `ctx.Data["locale"]` for web context.
2. Use `ctx.Locale` in `500.tmpl`, for consistency.
3. Add a test check for `500 page` locale usage.
4. Remove the `Str2html` and `DotEscape` from mail template context data. They are copy&paste errors introduced by #19169 and #16200. These functions are template functions (provided by the common renderer), not template data variables.
5. Make the email `SendAsync` function mockable (I was planning to add more tests but it would make this PR much too complex, so the tests could be done in another PR)

Due to a bug in the GitLab API, the diff_refs field is populated in the

response when fetching an individual merge request, but not when

fetching a list of them. That field is used to populate the merge base

commit SHA.

While there is detection for the merge base even when not populated by

the downloader, that detection is not flawless. Specifically, when a

GitLab merge request has a single commit, and gets merged with the

squash strategy, the base branch will be fast-forwarded instead of a

separate squash or merge commit being created. The merge base detection

attempts to find the last commit on the base branch that is also on the

PR branch, but in the fast-forward case that is the PR's only commit.

Assuming the head commit is also the merge base results in the import of

a PR with 0 commits and no diff.

This PR uses the individual merge request endpoint to fetch merge

request data with the diff_refs field. With its data, the base merge

commit can be properly set, which—by not relying on the detection

mentioned above—correctly imports PRs that were "merged" by

fast-forwarding the base branch.

ref: https://gitlab.com/gitlab-org/gitlab/-/issues/29620

Before this PR, the PR migration code populates Gitea's MergedCommitID

field by using GitLab's merge_commit_sha field. However, that field is

only populated when the PR was merged using a merge strategy. When a

squash strategy is used, squash_commit_sha is populated instead.

Given that Gitea does not keep track of merge and squash commits

separately, this PR simply populates Gitea's MergedCommitID by using

whichever field is present in the GitLab API response.
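A minimal sketch of the selection logic, assuming the GitLab response has been reduced to the two fields named above. The `mergeRequest` struct and `mergedCommitID` are hypothetical names, and preferring `merge_commit_sha` when both fields happen to be set is an assumption of this sketch.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// mergeRequest holds only the two fields this sketch needs.
type mergeRequest struct {
	MergeCommitSHA  string `json:"merge_commit_sha"`
	SquashCommitSHA string `json:"squash_commit_sha"`
}

// mergedCommitID returns whichever commit SHA is present in the payload.
// JSON null leaves a Go string at its zero value, so an absent field and a
// null field are handled the same way.
func mergedCommitID(payload []byte) (string, error) {
	var mr mergeRequest
	if err := json.Unmarshal(payload, &mr); err != nil {
		return "", err
	}
	if mr.MergeCommitSHA != "" {
		return mr.MergeCommitSHA, nil
	}
	return mr.SquashCommitSHA, nil
}

func main() {
	id, err := mergedCommitID([]byte(`{"merge_commit_sha": null, "squash_commit_sha": "abc123"}`))
	fmt.Println(id, err)
}
```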

Hello there,

Cargo Index over HTTP is now preferred over git for package updates: we should not force users who do not need the Git repo to have the repo created/updated on each publish (it can still be created in the packages settings).

The current behavior when publishing is to check if the repo exists and create it on the fly if not, then update its content.

Cargo HTTP Index does not rely on the repo itself, so this is useless for everyone not using the git protocol for the cargo registry.

This PR only disables the on-the-fly creation of the repo when publishing a crate.

This is linked to #26844 (error 500 when trying to publish a crate if the user is missing write access to the repo) because it's now optional.

---------

Co-authored-by: KN4CK3R <admin@oldschoolhack.me>

When `webhook.PROXY_URL` has been set, the old code will check if the

proxy host is in `ALLOWED_HOST_LIST` or reject requests through the

proxy. It requires users to add the proxy host to `ALLOWED_HOST_LIST`.

However, it actually allows all requests to any port on the host, when

the proxy host is probably an internal address.

But things may be even worse. `ALLOWED_HOST_LIST` doesn't really work

when requests are sent to the allowed proxy, and the proxy could forward

them to any hosts.

This PR fixes it by:

- If the proxy has been set, always allow connections to the host and port.

- Check `ALLOWED_HOST_LIST` before forwarding.

Closes #27455

> The mechanism responsible for long-term authentication (the 'remember me' cookie) uses a weak construction technique. It will hash the user's hashed password and the rands value; it will then call the secure cookie code, which will encrypt the user's name with the computed hash. If one were able to dump the database, they could extract those two values to rebuild that cookie and impersonate a user. That vulnerability exists from the date the dump was obtained until a user changed their password.
>
> To fix this security issue, the cookie could be created and verified using a different technique such as the one explained at https://paragonie.com/blog/2015/04/secure-authentication-php-with-long-term-persistence#secure-remember-me-cookies.

The PR removes the now obsolete setting `COOKIE_USERNAME`.

assert.Fail() will continue to execute the code while assert.FailNow() will not. I think those uses of assert.Fail() should exit immediately.

PS: perhaps it's a good idea to use [require](https://pkg.go.dev/github.com/stretchr/testify/require) somewhere because the assert package's default behavior does not exit when an error occurs, which makes it difficult to find the root cause of an error.

- Currently in the cron tasks, the 'Previous Time' only displays the previous time at which the cron library executed the function, but not any of the manual executions of the task.
- Store the last run's time in memory in the Task struct and use that when it is later than the time at which the cron library executed this task.
- This ensures that if an instance admin manually starts a task, there's feedback that this task is/has been run, because the task might run so quickly that the status icon has already been changed to a checkmark.
- Tasks that are executed at startup now reflect this as well, as the time of the execution of that task on startup is now being shown as 'Previous Time'.
- Added integration tests for the API part, which is easier to test because querying the HTML table of cron tasks is non-trivial.

- Resolves https://codeberg.org/forgejo/forgejo/issues/949
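The "use the later of the two times" rule from the list above amounts to a single comparison. A minimal sketch, where `previousTime` and the helper `at` are hypothetical names and not part of the Task struct:

```go
package main

import (
	"fmt"
	"time"
)

// at builds a fixed timestamp on an arbitrary day, for demonstration only.
func at(hour int) time.Time {
	return time.Date(2023, time.January, 1, hour, 0, 0, 0, time.UTC)
}

// previousTime returns the time to display as 'Previous Time': the last
// in-memory run time when it is later than the cron library's previous
// scheduled execution, otherwise the cron library's value.
func previousTime(cronPrev, lastRun time.Time) time.Time {
	if lastRun.After(cronPrev) {
		return lastRun
	}
	return cronPrev
}

func main() {
	// Scheduled run at 01:00, manual run at 03:00: the manual run wins.
	fmt.Println(previousTime(at(1), at(3)))
	// No manual run recorded (zero time): the scheduled time is kept.
	fmt.Println(previousTime(at(1), time.Time{}))
}
```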

(cherry picked from commit fd34fdac1408ece6b7d9fe6a76501ed9a45d06fa)

---------

Co-authored-by: Gusted <postmaster@gusted.xyz>

Co-authored-by: KN4CK3R <admin@oldschoolhack.me>

Co-authored-by: silverwind <me@silverwind.io>

This pull request is a minor code cleanup.

From the Go specification (https://go.dev/ref/spec#For_range):

> "1. For a nil slice, the number of iterations is 0."

> "3. If the map is nil, the number of iterations is 0."

`len` returns 0 if the slice or map is nil

(https://pkg.go.dev/builtin#len). Therefore, checking `len(v) > 0`

before a loop is unnecessary.
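A short demonstration of the rule quoted from the specification. `countIterations` is a hypothetical helper written for this example.

```go
package main

import "fmt"

// countIterations ranges over both arguments without any length check and
// reports how many loop bodies were executed in total.
func countIterations(s []int, m map[string]int) int {
	n := 0
	for range s {
		n++
	}
	for range m {
		n++
	}
	return n
}

func main() {
	var s []int          // nil slice
	var m map[string]int // nil map
	fmt.Println(len(s), len(m), countIterations(s, m)) // prints "0 0 0"
}
```

Ranging over the nil slice and the nil map is safe and simply performs no iterations, so a surrounding `if len(v) > 0` adds nothing.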

---

At the time of writing this pull request, there wasn't a lint rule that

catches these issues. The closest I could find is

https://staticcheck.dev/docs/checks/#S103

Signed-off-by: Eng Zer Jun <engzerjun@gmail.com>

With this PR we added the possibility to configure the Actions timeouts

values for killing tasks/jobs.

Particularly this enhancement is closely related to the `act_runner`

configuration reported below:

```

# The timeout for a job to be finished.

# Please note that the Gitea instance also has a timeout (3h by default) for the job.

# So the job could be stopped by the Gitea instance if it's timeout is shorter than this.

timeout: 3h

```

---

Setting the corresponding key in the INI configuration file, it is

possible to let jobs run for more than 3 hours.

Signed-off-by: Francesco Antognazza <francesco.antognazza@gmail.com>

- There's no need for `In` to be used, as it's a single parameter that's

being passed.

Refs: https://codeberg.org/forgejo/forgejo/pulls/1521

(cherry picked from commit 4a4955f43ae7fc50cfe3b48409a0a10c82625a19)

Co-authored-by: Gusted <postmaster@gusted.xyz>

When the user does not set a username lookup condition, LDAP will get an empty string `""` for the user, hence the following check

```
if isExist, err := user_model.IsUserExist(db.DefaultContext, 0, sr.Username)
```

will always conclude that the user does not exist, so updates to user information will never be performed.

Fix #27049

Part of #27065

This PR touches functions used in templates. As templates are not statically typed, errors are harder to find, but I hope I caught them all. I think some testing by other people would not hurt.

Blank issues should be enabled if they are not explicitly disabled through the `blank_issues_enabled` field of the issue config. The implementation currently has a bug: if you create an issue config file with only `contact_links` and without a `blank_issues_enabled` field, `blank_issues_enabled` is set to false by default.

The fix is only one line, but I decided to also improve the tests to make sure there are no other problems with the implementation.

This is a bugfix, so it should be backported to 1.20.
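The usual way to get a "true unless explicitly disabled" default is to set the field before decoding. The sketch below illustrates that pattern with `encoding/json` from the standard library instead of the YAML decoder the issue config really uses, so it is an analogy rather than the actual fix; `issueConfig`, `contactLink`, and `parseIssueConfig` are hypothetical names.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// contactLink and issueConfig are reduced, hypothetical types for this sketch.
type contactLink struct {
	Name string `json:"name"`
	URL  string `json:"url"`
}

type issueConfig struct {
	BlankIssuesEnabled bool          `json:"blank_issues_enabled"`
	ContactLinks       []contactLink `json:"contact_links"`
}

// parseIssueConfig pre-populates the default before decoding. Fields that
// are missing from the input keep their pre-set value.
func parseIssueConfig(data []byte) (issueConfig, error) {
	cfg := issueConfig{BlankIssuesEnabled: true}
	err := json.Unmarshal(data, &cfg)
	return cfg, err
}

func main() {
	cfg, err := parseIssueConfig([]byte(`{"contact_links": [{"name": "Chat", "url": "https://example.com"}]}`))
	fmt.Println(cfg.BlankIssuesEnabled, len(cfg.ContactLinks), err)
}
```

Decoding into a zero-valued struct instead would leave `BlankIssuesEnabled` at `false` whenever the field is omitted, which is the bug described above.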

This PR removes the second parameter of `unittest.MainTest`, `TestOptions.GiteaRoot`. It now detects the root directory from the current working directory.

---------

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

I noticed that the push mirrors endpoint is the only endpoint which returns times in long format rather than as time.Time().

I think the behavior should be consistent across the project.

----

## ⚠️ BREAKING ⚠️

This PR changes the time format used in API responses for all

push_mirror endpoints which return a push mirror.

---------

Co-authored-by: Giteabot <teabot@gitea.io>

Refs: https://codeberg.org/forgejo/forgejo/pulls/1385

Signed-off-by: Lars Lehtonen <lars.lehtonen@gmail.com>

(cherry picked from commit 589e7d346f51de4a0e2c461b220c8cad34133b2f)

Co-authored-by: Lars Lehtonen <lars.lehtonen@gmail.com>

This PR adds a new field `RemoteAddress` to both mirror types which

contains the sanitized remote address for easier (database) access to

that information. Will be used in the audit PR if merged.

Part of #27065

This reduces the usage of `db.DefaultContext`. I think I've got enough

files for the first PR. When this is merged, I will continue working on

this.

Considering how many files this PR affects, I hope it won't take too long to merge, so I don't end up in merge conflict hell.

---------

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Fix #26723

Add `ChangeDefaultBranch` to the `notifier` interface and implement it

in `indexerNotifier`. So when changing the default branch,

`indexerNotifier` sends a message to the `indexer queue` to update the

index.

---------

Co-authored-by: techknowlogick <matti@mdranta.net>

Currently, Artifact does not have an expiration and automatic cleanup

mechanism, and this feature needs to be added. It contains the following

key points:

- [x] add global artifact retention days option in config file. Default

value is 90 days.

- [x] add cron task to clean up expired artifacts. It should run once a

day.

- [x] support custom retention period from `retention-days: 5` in

`upload-artifact@v3`.

- [x] artifacts link in actions view should be non-clickable text when

expired.
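The expiry rule from the checklist above can be sketched as follows. The names (`defaultRetentionDays`, `expiresAt`, `isExpired`, `day`) are hypothetical, and whether a custom `retention-days` value is capped by the global setting is not stated in the description, so this sketch applies no cap.

```go
package main

import (
	"fmt"
	"time"
)

// defaultRetentionDays mirrors the documented default of 90 days.
const defaultRetentionDays = 90

// day returns a fixed reference date shifted by n days, for demonstration.
func day(n int) time.Time {
	return time.Date(2023, time.January, 1, 0, 0, 0, 0, time.UTC).AddDate(0, 0, n)
}

// expiresAt uses the per-upload retention period when one was given
// (retentionDays > 0) and the global default otherwise.
func expiresAt(uploaded time.Time, retentionDays int) time.Time {
	days := defaultRetentionDays
	if retentionDays > 0 {
		days = retentionDays
	}
	return uploaded.AddDate(0, 0, days)
}

// isExpired is the check a daily cleanup task could run for each artifact.
func isExpired(uploaded time.Time, retentionDays int, now time.Time) bool {
	return !now.Before(expiresAt(uploaded, retentionDays))
}

func main() {
	fmt.Println(isExpired(day(0), 5, day(6))) // custom 5-day retention
	fmt.Println(isExpired(day(0), 0, day(6))) // falls back to the default
}
```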

Just like `models/unittest`, the testing helper functions should be in a

separate package: `contexttest`

And complete the TODO:

> // TODO: move this function to other packages, because it depends on

"models" package

Cargo registry-auth feature requires config.json to have a property

auth-required set to true in order to send token to all registry

requests.

This is ok for git index because you can manually edit the config.json

file to add the auth-required, but when using sparse

(setting index url to

"sparse+https://git.example.com/api/packages/{owner}/cargo/"), the

config.json is dynamically rendered, and does not reflect changes to the

config.json file in the repo.

I see two approaches:

- Serve the real config.json file when fetching the config.json on the

cargo service.

- Automatically detect if the registry requires authorization. (This is

what I implemented in this PR).

What the PR does:

- When a cargo index repository is created, set auth-required in the config.json to whether or not the repository is private.
- When the cargo/config.json endpoint is called, set auth-required to whether or not the request was authorized using an API token.

The web context (modules/context.Context) is quite complex, and it's difficult for callers to initialize it correctly.

This PR introduces a `NewWebContext` function, to make sure the web context has the same behavior in different cases.

- Resolves https://codeberg.org/forgejo/forgejo/issues/580

- Return an `upload_field` in any release API response, which points to the API URL for uploading new assets.

- Adds unit test.

- Adds integration testing to verify URL is returned correctly and that

upload endpoint actually works

---------

Co-authored-by: Gusted <postmaster@gusted.xyz>

Replace #22751

1. only support the default branch in the repository setting.

2. autoload schedule data from the schedule table after starting the

service.

3. support specific syntax like `@yearly`, `@monthly`, `@weekly`,

`@daily`, `@hourly`
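For reference, these shorthand schedules have conventional five-field cron equivalents. The sketch below expands them with a lookup table; it is not the parser used by this PR, `expandSchedule` is a hypothetical helper, and the mapping shown is the common cron convention, which the actual implementation may handle differently.

```go
package main

import "fmt"

// macros lists the conventional cron equivalents of the shorthand schedules
// (minute hour day-of-month month day-of-week).
var macros = map[string]string{
	"@yearly":  "0 0 1 1 *",
	"@monthly": "0 0 1 * *",
	"@weekly":  "0 0 * * 0",
	"@daily":   "0 0 * * *",
	"@hourly":  "0 * * * *",
}

// expandSchedule replaces a known macro with its cron expression and returns
// any other spec unchanged.
func expandSchedule(spec string) string {
	if expr, ok := macros[spec]; ok {
		return expr
	}
	return spec
}

func main() {
	for _, spec := range []string{"@daily", "@hourly", "30 5 * * 1,3"} {
		fmt.Printf("%-14s => %s\n", spec, expandSchedule(spec))
	}
}
```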

## How to use

See the [GitHub Actions

document](https://docs.github.com/en/actions/using-workflows/events-that-trigger-workflows#schedule)

for getting more detailed information.

```yaml
on:
  schedule:
    - cron: '30 5 * * 1,3'
    - cron: '30 5 * * 2,4'

jobs:
  test_schedule:
    runs-on: ubuntu-latest
    steps:
      - name: Not on Monday or Wednesday
        if: github.event.schedule != '30 5 * * 1,3'
        run: echo "This step will be skipped on Monday and Wednesday"
      - name: Every time
        run: echo "This step will always run"
```

Signed-off-by: Bo-Yi.Wu <appleboy.tw@gmail.com>

---------

Co-authored-by: Jason Song <i@wolfogre.com>

Co-authored-by: techknowlogick <techknowlogick@gitea.io>

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

- Add a new `CreateSecretOption` struct for creating secrets

- Implement a `CreateOrgSecret` function to create a secret in an

organization

- Add a new route in `api.go` to handle the creation of organization

secrets

- Update the Swagger template to include the new `CreateOrgSecret` API

endpoint

---------

Signed-off-by: appleboy <appleboy.tw@gmail.com>

## Archived labels

This adds the structure to allow for archived labels.

Archived labels are, just like closed milestones or projects, a medium to hide information without deleting it.

It is especially useful if there are outdated labels that should no longer be used without deleting the label entirely.

## Changes

1. UI and API have been equipped with the support to mark a label as archived

2. The time when a label has been archived will be stored in the DB

## Outsourced for the future

There's no special handling for archived labels at the moment.

This will be done in the future.

## Screenshots

Part of https://github.com/go-gitea/gitea/issues/25237

---------

Co-authored-by: delvh <dev.lh@web.de>

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Fix #26129

Replace #26258

This PR introduces a transaction when creating a pull request, so that if some step fails, everything is rolled back and no dirty pull request is left behind.

---------

Co-authored-by: Giteabot <teabot@gitea.io>

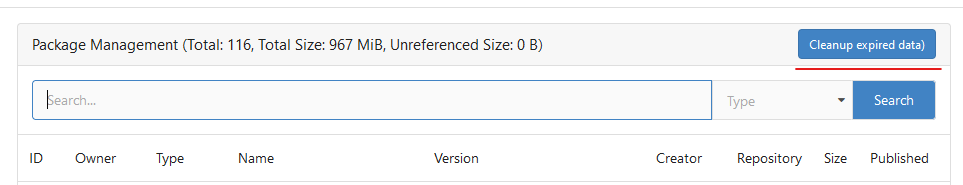

Until now expired package data gets deleted daily by a cronjob. The

admin page shows the size of all packages and the size of unreferenced

data. The users (#25035, #20631) expect the deletion of this data if

they run the cronjob from the admin page but the job only deletes data

older than 24h.

This PR adds a new button which deletes all expired data.

---------

Co-authored-by: silverwind <me@silverwind.io>

Follow #25229

Copy from

https://github.com/go-gitea/gitea/pull/26290#issuecomment-1663135186

The bug is that we cannot get changed files for the

`pull_request_target` event. This event runs in the context of the base

branch, so we won't get any changes if we call

`GetFilesChangedSinceCommit` with `PullRequest.Base.Ref`.

I noticed that `issue_service.CreateComment` adds transaction operations on `issues_model.CreateComment`. We can merge the two functions and avoid calling each other's methods in the `services` layer.

Co-authored-by: Giteabot <teabot@gitea.io>

- The user renaming function has zero test coverage.

- This patch brings that up to speed to test for various scenarios and ensure that in a normal workflow the correct things have changed to their respective new values. Most scenarios are to ensure certain things DO NOT happen.

(cherry picked from commit 5b9d34ed115c9ef24012b8027959ea0afdcb4e2d)

Refs: https://codeberg.org/forgejo/forgejo/pulls/1156

Co-authored-by: Gusted <postmaster@gusted.xyz>

- Just to get 100% coverage on services/wiki/wiki_path.go, nothing special. This is just a formality.

(cherry picked from commit 6b3528920fbf18c41d6aeb95498af48443282370)

Refs: https://codeberg.org/forgejo/forgejo/pulls/1156

Co-authored-by: Gusted <postmaster@gusted.xyz>

In the original implementation, we could only get the first 30 records of
the commit status (the default page size). If there are more than 30
commit statuses, this leads to bug #25990. I made the following two
changes.

- On the page, use the `db.ListOptions{ListAll: true}` parameter

instead of `db.ListOptions{}`

- The `GetLatestCommitStatus` function checks whether or not a pager is
being used.

Fixes #25990

The API should only return the real email address of a user if the caller
is logged in. The check that does this doesn't work. This PR fixes it. This
is not really a security issue, but it can lead to spam.

---------

Co-authored-by: silverwind <me@silverwind.io>

Attempt to fix #25744

Fix the log level: when a repo is deleted, we get a "hook not found by id"
error. It should be logged at warn level to reduce the noise in the logs.

---------

Co-authored-by: delvh <dev.lh@web.de>

- The `NoBetterThan` function can only handle comparisons between

"pending," "success," "error," and "failure." For any other comparison,

we directly return false. This prevents logic errors like the one in

#26121.

- The callers of the `NoBetterThan` function should also avoid making

incomparable calls.
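The rule described in the two points above can be sketched roughly as follows. This is only an illustration: the type name, the constants and in particular the ordering in `priorities` are assumptions made for the example, not a copy of the real implementation.

```go
package main

// CommitStatusState is a hypothetical stand-in for the commit status type.
type CommitStatusState string

const (
	StatePending CommitStatusState = "pending"
	StateSuccess CommitStatusState = "success"
	StateError   CommitStatusState = "error"
	StateFailure CommitStatusState = "failure"
)

// priorities ranks the four comparable states; a lower number is "worse".
// The exact ordering is an assumption of this sketch.
var priorities = map[CommitStatusState]int{
	StateError:   0,
	StateFailure: 1,
	StatePending: 2,
	StateSuccess: 3,
}

// NoBetterThan reports whether s is no better than other.
// If either state is not one of the four comparable states,
// the comparison is meaningless and false is returned.
func (s CommitStatusState) NoBetterThan(other CommitStatusState) bool {
	p1, ok1 := priorities[s]
	p2, ok2 := priorities[other]
	if !ok1 || !ok2 {
		return false
	}
	return p1 <= p2
}
```

With this shape, a call such as `CommitStatusState("warning").NoBetterThan(StateSuccess)` returns false instead of silently treating the unknown state as the zero priority.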

---------

Co-authored-by: yp05327 <576951401@qq.com>

Co-authored-by: puni9869 <80308335+puni9869@users.noreply.github.com>

This PR

- Fix #26093. Replace `time.Time` with `timeutil.TimeStamp`

- Fix #26135. Add missing `xorm:"extends"` to `CountLFSMetaObject` for

LFS meta object query

- Add a unit test for LFS meta object garbage collection

- cancel running jobs if the event is push

- Add a new function `CancelRunningJobs` to cancel all running jobs of a

run

- Update `FindRunOptions` struct to include `Ref` field and update its

condition in `toConds` function

- Implement auto cancellation of running jobs in the same workflow in

`notify` function

related task: https://github.com/go-gitea/gitea/pull/22751/

---------

Signed-off-by: Bo-Yi Wu <appleboy.tw@gmail.com>

Signed-off-by: appleboy <appleboy.tw@gmail.com>

Co-authored-by: Jason Song <i@wolfogre.com>

Co-authored-by: delvh <dev.lh@web.de>

Replace `github.com/gogs/cron` with `github.com/go-co-op/gocron` as the

former package has not been maintained for many years.

---------

Co-authored-by: delvh <dev.lh@web.de>

To avoid deadlock problems, almost all database-related functions should
have ctx as the first parameter.

This PR refactors some of these functions.

The version listed in rpm repodata should only contain the rpm version

(1.0.0) and not the combination of version and release (1.0.0-2). We

correct this behaviour in primary.xml.gz, filelists.xml.gz and

others.xml.gz.
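As a small illustration of the split described above, the following sketch separates a combined string such as `1.0.0-2` into version and release. The helper name and the rule "the release is whatever follows the last hyphen" are assumptions for this example, not the code of this PR.

```go
package main

import "strings"

// splitVersionRelease splits a combined "version-release" string at the
// last hyphen. If there is no hyphen, the whole input is the version and
// the release is empty.
func splitVersionRelease(full string) (version, release string) {
	i := strings.LastIndex(full, "-")
	if i < 0 {
		return full, ""
	}
	return full[:i], full[i+1:]
}
```

Only the `version` part would then be written to the version field of the repodata files.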

Signed-off-by: Peter Verraedt <peter@verraedt.be>

Fix #25776. Close #25826.

In the discussion of #25776, @wolfogre's suggestion was to remove the

commit status of `running` and `warning` to keep it consistent with

github.

references:

-

https://docs.github.com/en/rest/commits/statuses?apiVersion=2022-11-28#about-commit-statuses

## ⚠️ BREAKING ⚠️

The commit statuses of Gitea are now consistent with GitHub: only
`pending`, `success`, `error` and `failure` are supported, while `warning`
and `running` are not supported anymore.

---------

Co-authored-by: Jason Song <i@wolfogre.com>

Bumping `github.com/golang-jwt/jwt` from v4 to v5.

`github.com/golang-jwt/jwt` v5 is bringing some breaking changes:

- The standard `Valid()` method on claims is removed. It's replaced by the
`ClaimsValidator` interface with a `Validate()` method, which is called
after standard validation. Gitea doesn't seem to be using this logic.

- `jwt.Token` has a field `Valid`, so it's checked in `ParseToken`

function in `services/auth/source/oauth2/token.go`

---------

Co-authored-by: Giteabot <teabot@gitea.io>

Before: the concept "Content string" is used everywhere. It has some

problems:

1. Sometimes it means "base64 encoded content", sometimes it means "raw

binary content"

2. It doesn't work with large files, eg: uploading a 1G LFS file would

make Gitea process OOM

This PR does the refactoring: use "ContentReader" / "ContentBase64"

instead of "Content"

This PR is not breaking because the key in API JSON is still "content":

`` ContentBase64 string `json:"content"` ``

Related issue: #18368

It doesn't seem right to "guess" the file encoding/BOM when using API to

upload files.

The API should save the uploaded content as-is.

We refactored the token parsing part of `userIDFromToken` into a new

function `parseToken`. `parseToken` returns the string `token` from

request, and a boolean `ok` representing whether the token exists or

not. So we can distinguish between token non-existence and token

inconsistency in the `verfity` function, thus solving the problem of no

proper error message when the token is inconsistent.
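A rough sketch of the idea follows: returning an `ok` flag next to the token lets the caller tell "no token" apart from "wrong token". The lookup order (Bearer header first, then a `token` query parameter), the helper names `tokenFromValues` and `checkToken`, and the error values are all assumptions made for this example.

```go
package main

import (
	"errors"
	"net/http"
	"strings"
)

var (
	errTokenMissing  = errors.New("no token in request")
	errTokenMismatch = errors.New("token does not match the expected token")
)

// tokenFromValues picks the token out of an Authorization header value,
// falling back to a query parameter value. ok is false if neither is set.
func tokenFromValues(authHeader, queryToken string) (token string, ok bool) {
	scheme, value, found := strings.Cut(authHeader, " ")
	if found && strings.EqualFold(scheme, "bearer") && value != "" {
		return value, true
	}
	if queryToken != "" {
		return queryToken, true
	}
	return "", false
}

// parseToken returns the token from the request and whether it exists.
func parseToken(req *http.Request) (string, bool) {
	return tokenFromValues(req.Header.Get("Authorization"), req.URL.Query().Get("token"))
}

// checkToken distinguishes a missing token from an inconsistent one,
// so that each case can produce its own error message.
func checkToken(token string, ok bool, expected string) error {
	if !ok {
		return errTokenMissing
	}
	if token != expected {
		return errTokenMismatch
	}
	return nil
}
```

A caller would typically do `token, ok := parseToken(req)` and pass both values on, rather than collapsing the two failure cases into one.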

close #24439

related #22119

---------

Co-authored-by: Jason Song <i@wolfogre.com>

Co-authored-by: Giteabot <teabot@gitea.io>

Follow #25229

At present, when the trigger event is `pull_request_target`, the `ref`

and `sha` of `ActionRun` are set according to the base branch of the

pull request. This makes it impossible for us to find the head branch of

the `ActionRun` directly. In this PR, the `ref` and `sha` will always be

set to the head branch and they will be changed to the base branch when

generating the task context.

Remove unnecessary `if opts.Logger != nil` checks.

* For "CLI doctor" mode, output to the console's "logger.Info".

* For "Web Task" mode, output to the default "logger.Debug", to avoid

flooding the server's log in a busy production instance.

Co-authored-by: Giteabot <teabot@gitea.io>

Fixes #24723

Direct serving of content aka HTTP redirect is not mentioned in any of

the package registry specs but lots of official registries do that so it

should be supported by the usual clients.

When a branch's commit message is too long, the column may be too short
(TEXT is 16K for MySQL).

This PR fixes it by storing only the summary, because these messages are
only shown on the branch list or possibly in a future search.

Related #14180

Related #25233

Related #22639

Close #19786

Related #12763

This PR changes all branch retrieval methods from reading git data to
reading the database, in order to reduce git read operations.

- [x] Sync git branches information into database when push git data

- [x] Create a new table `Branch`, merge some columns of `DeletedBranch`

into `Branch` table and drop the table `DeletedBranch`.

- [x] Read `Branch` table when visit `code` -> `branch` page

- [x] Read `Branch` table when list branch names in `code` page dropdown

- [x] Read `Branch` table when list git ref compare page

- [x] Provide a button in admin page to manually sync all branches.

- [x] Sync branches if repository is not empty but database branches are

empty when visiting pages with branches list

- [x] Use `commit_time desc` as the default FindBranch order by to keep

consistent as before and deleted branches will be always at the end.

---------

Co-authored-by: Jason Song <i@wolfogre.com>

Fix #25451.

Bugfixes:

- When stopping the zombie or endless tasks, set `LogInStorage` to true

after transferring the file to storage. This was missing, so logs could be
written to a nonexistent file in DBFS because `LogInStorage` was false.

- Always update `ActionTask.Updated` when there's a new state reported

by the runner, even if there's no change. This is to avoid the task

being judged as a zombie task.

Enhancement:

- Support `Stat()` for DBFS file.

- `WriteLogs` refuses to write if it could result in content holes.

---------

Co-authored-by: Giteabot <teabot@gitea.io>

Fix #25088

This PR adds the support for

[`pull_request_target`](https://docs.github.com/en/actions/using-workflows/events-that-trigger-workflows#pull_request_target)

workflow trigger. `pull_request_target` is similar to `pull_request`,

but the workflow triggered by the `pull_request_target` event runs in

the context of the base branch of the pull request rather than the head

branch. Since the workflow from the base is considered trusted, it can

access the secrets and doesn't need approvals to run.

this will allow us to fully localize it later

PS: we can not migrate back as the old value was a one-way conversion

prepare for #25213

---

*Sponsored by Kithara Software GmbH*

In modern days, there is no reason to make users set "charset" anymore.

Close #25378

## ⚠️ BREAKING

The key `[database].CHARSET` was removed completely as every newer

(>10years) MySQL database supports `utf8mb4` already.

There is a (deliberately) undocumented new fallback option if anyone

still needs to use it, but we don't recommend using it as it simply

causes problems.

Fix #21072

Username Attribute is not a required item when creating an

authentication source. If Username Attribute is empty, the username

value of LDAP user cannot be read, so all users from LDAP will be marked

as inactive by mistake when synchronizing external users.

This PR improves the sync logic: if the username is empty, the email
address is used to find the user.

1. The "web" package shouldn't depend on the "modules/context" package,

instead, let each "web context" register themselves to the "web"

package.

2. The old Init/Free doesn't make sense, so simplify it

* The ctx in "Init(ctx)" is never used, and shouldn't be used that way

* The "Free" is never called and shouldn't be called because the SSPI

instance is shared

---------

Co-authored-by: Giteabot <teabot@gitea.io>

Follow up #22405

Fix #20703

This PR rewrites the storage configuration read sequence, with some
breaking changes and tests. It is stricter than before and also fixes some
inheritance problems.

- Move storage's MinioConfig struct into setting, so that after the
configuration is loaded, the values are stored in the struct rather than
left in some section.

- All configuration for a storage should be stored in one section;
configuration items cannot be overridden by multiple sections. The
priority of configuration is `[attachment]` > `[storage.attachments]` |
`[storage.customized]` > `[storage]` > `default`

- Extra override configuration items, currently `SERVE_DIRECT`,
`MINIO_BASE_PATH` and `MINIO_BUCKET`, can be configured in another
section. The priority of the override configuration is `[attachment]` >
`[storage.attachments]` > `default`.

- Add more tests for storages configurations.

- Update the storage documentations.
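The "one section wins" rule from the list above can be pictured with the sketch below. It only illustrates the priority order; the `section` type, the function name and the way the candidate list is passed in are assumptions, not the actual setting code.

```go
package main

// section is a hypothetical flat key/value view of one ini section.
type section map[string]string

// pickStorageSection walks the candidate section names in priority order
// and returns the first one that exists. Values are never merged across
// sections. If no candidate exists, "default" is returned with a nil section.
func pickStorageSection(cfg map[string]section, candidates []string) (string, section) {
	for _, name := range candidates {
		if sec, ok := cfg[name]; ok {
			return name, sec
		}
	}
	return "default", nil
}
```

For attachments, the candidate list would follow the order given above, for example `[]string{"attachment", "storage.attachments", "storage"}`.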

---------

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

close#24540

related:

- Protocol: https://gitea.com/gitea/actions-proto-def/pulls/9

- Runner side: https://gitea.com/gitea/act_runner/pulls/201

changes:

- Add column of `labels` to table `action_runner`, and combine the value

of `agent_labels` and `custom_labels` column to `labels` column.

- Store `labels` when registering `act_runner`.

- Update `labels` when `act_runner` starts and calls `Declare`.

- Users cannot modify the `custom labels` in edit page any more.

other changes:

- Store `version` when registering `act_runner`.

- If the runner is the latest version, parse the version from `Declare`.
Older runner versions still have their version parsed from the request
header.

The plan is that all built-in auth providers use inline SVG for more

flexibility in styling and to get the GitHub icon to follow

`currentcolor`. This only removes the `public/img/auth` directory and

adds the missing svgs to our svg build.

It should map the built-in providers to these SVGs and render them. If

the user has set an Icon URL, it should render that as an `img` tag

instead.

```

gitea-azure-ad

gitea-bitbucket

gitea-discord

gitea-dropbox

gitea-facebook

gitea-gitea

gitea-gitlab

gitea-google

gitea-mastodon

gitea-microsoftonline

gitea-nextcloud

gitea-twitter

gitea-yandex

octicon-mark-github

```

GitHub logo is now white again on dark theme:

<img width="431" alt="Screenshot 2023-06-12 at 21 45 34"

src="https://github.com/go-gitea/gitea/assets/115237/27a43504-d60a-4132-a502-336b25883e4d">

---------

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Extract from #22743

`DeleteBranch` will trigger a push update event, so
`pull_service.CloseBranchPulls` has been invoked twice. It is also better
to move `AddDeletedBranch` to the push update, so that it is triggered even
when a user deletes a branch via a git command.

Co-authored-by: Giteabot <teabot@gitea.io>

## Changes

- Adds the following high level access scopes, each with `read` and

`write` levels:

- `activitypub`

- `admin` (hidden if user is not a site admin)

- `misc`

- `notification`

- `organization`

- `package`

- `issue`

- `repository`

- `user`

- Adds new middleware function `tokenRequiresScopes()` in addition to

`reqToken()`

- `tokenRequiresScopes()` is used for each high-level api section

- _if_ a scoped token is present, checks that the required scope is

included based on the section and HTTP method

- `reqToken()` is used for individual routes

- checks that required authentication is present (but does not check

scope levels as this will already have been handled by

`tokenRequiresScopes()`)

- Adds migration to convert old scoped access tokens to the new set of

scopes

- Updates the user interface for scope selection

### User interface example

<img width="903" alt="Screen Shot 2023-05-31 at 1 56 55 PM"

src="https://github.com/go-gitea/gitea/assets/23248839/654766ec-2143-4f59-9037-3b51600e32f3">

<img width="917" alt="Screen Shot 2023-05-31 at 1 56 43 PM"

src="https://github.com/go-gitea/gitea/assets/23248839/1ad64081-012c-4a73-b393-66b30352654c">

## tokenRequiresScopes Design Decision

- `tokenRequiresScopes()` was added to more reliably cover api routes.

For an incoming request, this function uses the given scope category

(say `AccessTokenScopeCategoryOrganization`) and the HTTP method (say

`DELETE`) and verifies that any scoped tokens in use include

`delete:organization`.

- `reqToken()` is used to enforce auth for individual routes that

require it. If a scoped token is not present for a request,

`tokenRequiresScopes()` will not return an error
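The scope check described above could be sketched as below. This is a hedged illustration that follows the `read`/`write` levels listed under "Changes": the mapping of HTTP methods to levels, the `level:category` string format and the rule that `write` implies `read` are assumptions of the sketch, and the function names are hypothetical.

```go
package main

import "net/http"

// requiredScope builds the scope string a scoped token must include for a
// request. Assumption: safe methods need "read", everything else "write".
func requiredScope(category, method string) string {
	switch method {
	case http.MethodGet, http.MethodHead, http.MethodOptions:
		return "read:" + category
	default:
		return "write:" + category
	}
}

// tokenAllows reports whether the token's scopes cover the request.
// Assumption: a "write" scope also satisfies a "read" requirement.
func tokenAllows(tokenScopes []string, category, method string) bool {
	need := requiredScope(category, method)
	for _, s := range tokenScopes {
		if s == need {
			return true
		}
		if need == "read:"+category && s == "write:"+category {
			return true
		}
	}
	return false
}
```

Whether a token is present at all is a separate question, which is why `reqToken()` is still needed on individual routes.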

## TODO

- [x] Alphabetize scope categories

- [x] Change 'public repos only' to a radio button (private vs public).

Also expand this to organizations

- [X] Disable token creation if no scopes selected. Alternatively, show

warning

- [x] `reqToken()` is missing from many `POST/DELETE` routes in the api.

`tokenRequiresScopes()` only checks that a given token has the correct

scope, `reqToken()` must be used to check that a token (or some other

auth) is present.

- _This should be addressed in this PR_

- [x] The migration should be reviewed very carefully in order to

minimize access changes to existing user tokens.

- _This should be addressed in this PR_

- [x] Link to api to swagger documentation, clarify what

read/write/delete levels correspond to

- [x] Review cases where more than one scope is needed as this directly

deviates from the api definition.

- _This should be addressed in this PR_

- For example:

```go

m.Group("/users/{username}/orgs", func() {

m.Get("", reqToken(), org.ListUserOrgs)

m.Get("/{org}/permissions", reqToken(), org.GetUserOrgsPermissions)

}, tokenRequiresScopes(auth_model.AccessTokenScopeCategoryUser,

auth_model.AccessTokenScopeCategoryOrganization),

context_service.UserAssignmentAPI())

```

## Future improvements

- [ ] Add required scopes to swagger documentation

- [ ] Redesign `reqToken()` to be opt-out rather than opt-in

- [ ] Subdivide scopes like `repository`

- [ ] Once a token is created, if it has no scopes, we should display

text instead of an empty bullet point

- [ ] If the 'public repos only' option is selected, should read

categories be selected by default

Closes #24501

Closes #24799

Co-authored-by: Jonathan Tran <jon@allspice.io>

Co-authored-by: Kyle D <kdumontnu@gmail.com>

Co-authored-by: silverwind <me@silverwind.io>

This PR creates an API endpoint for creating/updating/deleting multiple

files in one API call similar to the solution provided by

[GitLab](https://docs.gitlab.com/ee/api/commits.html#create-a-commit-with-multiple-files-and-actions).

To achieve this, the `CreateOrUpdateRepoFile` and `DeleteRepoFile` functions

in files service are unified into one function supporting multiple files

and actions.

Resolves #14619

Before, there was a "graceful function", RunWithShutdownFns, mainly for
modules which don't support context.

The old queue system doesn't work well with context, so the old queues
need it.

After the queue refactoring, the new queue works well with context, so
Golang context should be used as much as possible. `RunWithShutdownFns` can
be removed (replaced by RunWithCancel for the context cancel mechanism) and
the related code can be simplified.

This PR also fixes some legacy queue-init problems, eg:

* typo : archiver: "unable to create codes indexer queue" => "unable to

create repo-archive queue"

* no nil check for failed queues, which causes unfriendly panic

After this PR, many goroutines could have better display name:

This PR replaces all string refName as a type `git.RefName` to make the

code more maintainable.

Fix #15367

Replaces #23070

It also fixes a bug where tags are not synced, because `git remote --prune
origin` will not remove local tags that were removed on the remote.

We should in fact use `git fetch --prune --tags origin` rather than `git
remote update origin` to do the sync.

Some answer from ChatGPT as ref.

> If the git fetch --prune --tags command is not working as expected,

there could be a few reasons why. Here are a few things to check:

>

>Make sure that you have the latest version of Git installed on your

system. You can check the version by running git --version in your

terminal. If you have an outdated version, try updating Git and see if

that resolves the issue.

>

>Check that your Git repository is properly configured to track the

remote repository's tags. You can check this by running git config

--get-all remote.origin.fetch and verifying that it includes

+refs/tags/*:refs/tags/*. If it does not, you can add it by running git

config --add remote.origin.fetch "+refs/tags/*:refs/tags/*".

>

>Verify that the tags you are trying to prune actually exist on the

remote repository. You can do this by running git ls-remote --tags

origin to list all the tags on the remote repository.

>

>Check if any local tags have been created that match the names of tags

on the remote repository. If so, these local tags may be preventing the

git fetch --prune --tags command from working properly. You can delete

local tags using the git tag -d command.

---------

Co-authored-by: delvh <dev.lh@web.de>

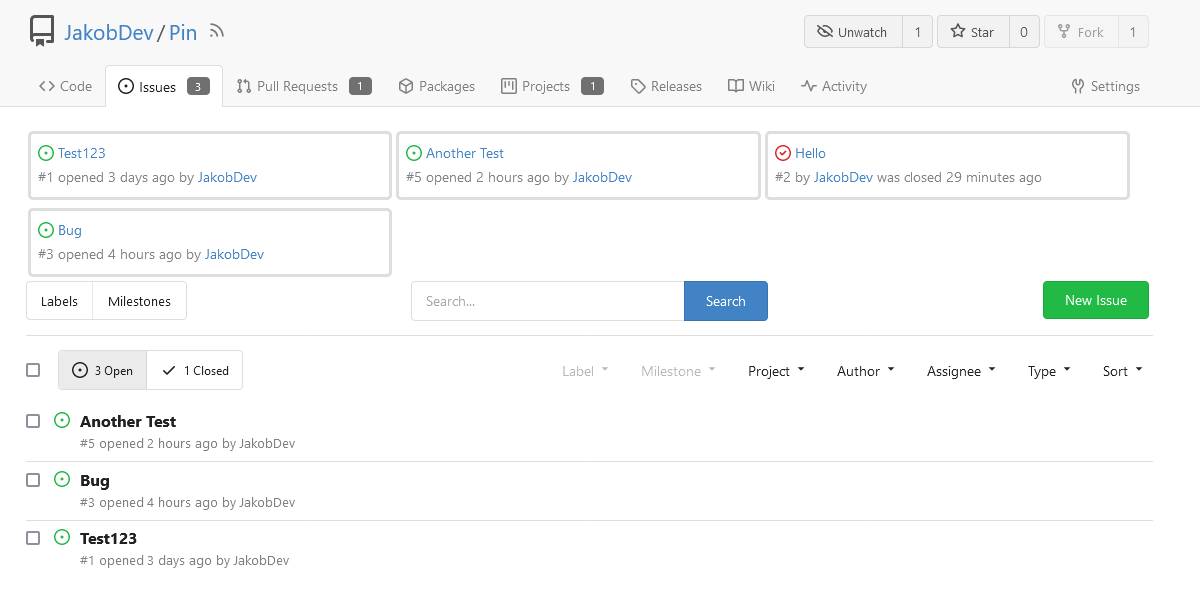

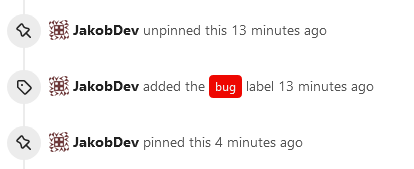

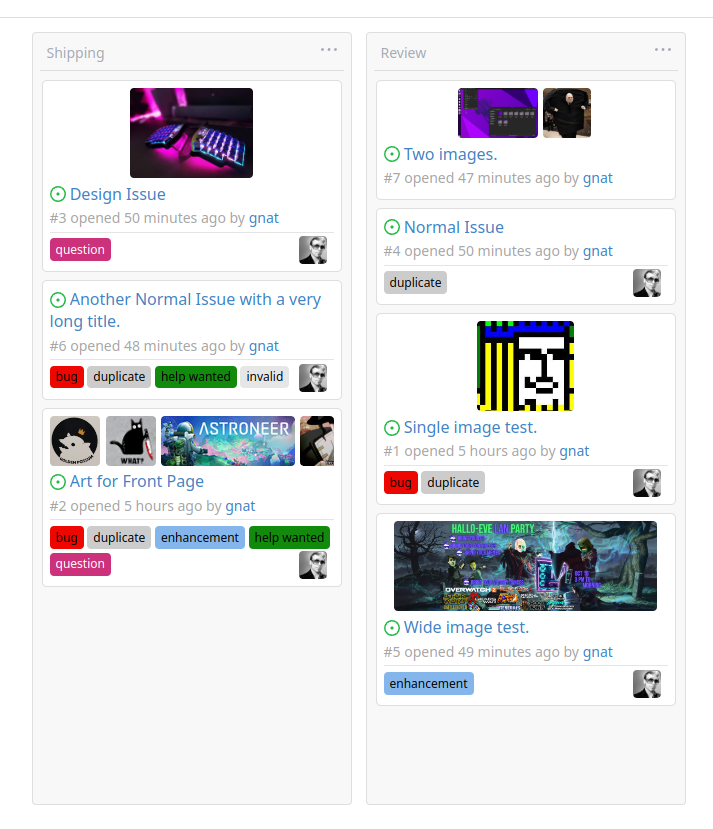

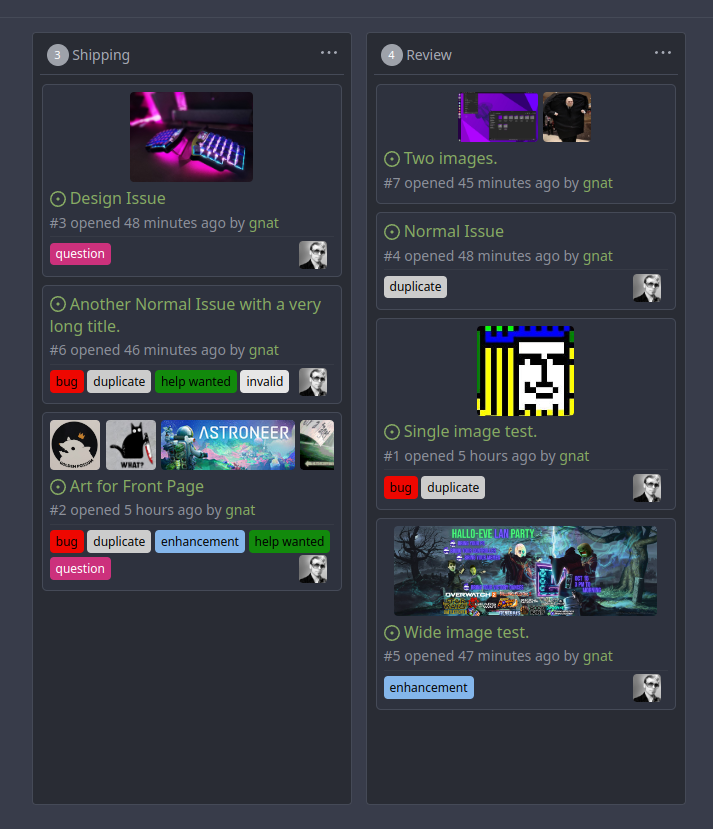

This adds the ability to pin important Issues and Pull Requests. You can

also move pinned Issues around to change their Position. Resolves #2175.

## Screenshots

The Design was mostly copied from the Projects Board.

## Implementation

This uses a new `pin_order` Column in the `issue` table. If the value is

set to 0, the Issue is not pinned. If it's set to a bigger value, the

value is the Position. 1 means it's the first pinned Issue, 2 means it's

the second one, etc. This is divided into Issues and Pull Requests for each

Repo.
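To make the `pin_order` semantics concrete, here is a minimal in-memory sketch. It assumes that a newly pinned Issue goes to the end of the pinned list and that unpinning closes the gap; the `Issue` struct and the function names are hypothetical and no database is involved.

```go
package main

// Issue is a hypothetical, minimal stand-in for a row in the issue table.
type Issue struct {
	ID       int64
	PinOrder int // 0 = not pinned, 1 = first pinned Issue, 2 = second, ...
}

// pin places target after all currently pinned issues of the same list.
func pin(issues []*Issue, target *Issue) {
	if target.PinOrder != 0 {
		return // already pinned
	}
	highest := 0
	for _, is := range issues {
		if is.PinOrder > highest {
			highest = is.PinOrder
		}
	}
	target.PinOrder = highest + 1
}

// unpin resets target to 0 and moves every issue pinned after it up by one,
// so the positions stay contiguous.
func unpin(issues []*Issue, target *Issue) {
	old := target.PinOrder
	if old == 0 {
		return // not pinned
	}
	target.PinOrder = 0
	for _, is := range issues {
		if is.PinOrder > old {
			is.PinOrder--
		}
	}
}
```

Because Issues and Pull Requests are ordered separately per repo, `issues` here would contain only one of the two kinds for a single repo.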

## TODO

- [x] You can currently pin as many Issues as you want. Maybe we should

add a Limit, which is configurable. GitHub uses 3, but I prefer 6, as

this is better for bigger Projects, but I'm open for suggestions.

- [x] Pin and Unpin events need to be added to the Issue history.

- [x] Tests

- [x] Migration

**The feature itself is currently fully working, so testers who may find

weird edge cases are very welcome!**

---------

Co-authored-by: silverwind <me@silverwind.io>

Co-authored-by: Giteabot <teabot@gitea.io>

close https://github.com/go-gitea/gitea/issues/16321

Provided a webhook trigger for requesting someone to review the Pull

Request.

Some modifications have been made to the returned `PullRequestPayload`

based on the GitHub webhook settings, including:

- add a description of the current reviewer object as

`RequestedReviewer` .

- setting the action to either **review_requested** or

**review_request_removed** based on the operation.

- adding the `RequestedReviewers` field to the issues_model.PullRequest.

This field will be loaded into the PullRequest through

`LoadRequestedReviewers()` when `ToAPIPullRequest` is called.

After the Pull Request is merged, I will supplement the relevant

documentation.

Fix #24856

Rename "context.contextKey" to "context.WebContextKey", this context is

for web context only. But the Context itself is not renamed, otherwise

it would cause a lot of changes (if we really want to rename it, there

could be a separate PR).

The old test code doesn't really test anything, and the "install page" has
been broken more than once, so use new test code to make sure the "install
page" works.

Use `default_merge_message/REBASE_TEMPLATE.md` for amending the message

of the last commit in the list of commits that was merged. Previously

this template was mentioned in the documentation but not actually used.

In this template additional variables `CommitTitle` and `CommitBody` are

available, for the title and body of the commit.

Ideally the message of every commit would be updated using the template,

but doing an interactive rebase or merging commits one by one is

complicated, so that is left as a future improvement.

Replace #20257 (which is stale and incomplete)

Close #20255

Major changes:

* Deprecate the "WHITELIST", use "ALLOWLIST"

* Add wildcard support for EMAIL_DOMAIN_ALLOWLIST/EMAIL_DOMAIN_BLOCKLIST

* Update example config file and document

* Improve tests

## ⚠️ Breaking

The `log.<mode>.<logger>` style config has been dropped. If you used it,

please check the new config manual & app.example.ini to make your

instance output logs as expected.

Although many legacy options still work, it's encouraged to upgrade to

the new options.

The SMTP logger is deleted because SMTP is not suitable to collect logs.

If you have manually configured Gitea log options, please confirm the

logger system works as expected after upgrading.

## Description

Close #12082 and maybe more log-related issues, resolve some related

FIXMEs in old code (which seems unfixable before)

Just like rewriting queue #24505 : make code maintainable, clear legacy

bugs, and add the ability to support more writers (eg: JSON, structured

log)

There is a new document (with examples): `logging-config.en-us.md`

This PR is safer than the queue rewriting, because it's just for

logging, it won't break other logic.

## The old problems

The logging system is quite old and difficult to maintain:

* Unclear concepts: Logger, NamedLogger, MultiChannelledLogger,

SubLogger, EventLogger, WriterLogger etc

* For some code it is difficult to know whether it is right:

`log.DelNamedLogger("console")` vs `log.DelNamedLogger(log.DEFAULT)` vs

`log.DelLogger("console")`

* The old system heavily depends on ini config system, it's difficult to

create new logger for different purpose, and it's very fragile.

* The "color" trick is difficult to use and read, many colors are

unnecessary, and in the future structured log could help

* It's difficult to add other log formats, eg: JSON format

* The log outputer doesn't have full control of its goroutine, it's

difficult to make outputer have advanced behaviors

* The logs could be lost in some cases: eg: no Fatal error when using

CLI.

* Config options are passed by JSON, which is quite fragile.

* INI package makes the KEY in `[log]` section visible in `[log.sub1]`

and `[log.sub1.subA]`, this behavior is quite fragile and would cause

more unclear problems, and there is no strong requirement to support

`log.<mode>.<logger>` syntax.

## The new design

See `logger.go` for documents.


## TODO

* [x] add some new tests

* [x] fix some tests

* [x] test some sub-commands (manually ....)

---------

Co-authored-by: Jason Song <i@wolfogre.com>

Co-authored-by: delvh <dev.lh@web.de>

Co-authored-by: Giteabot <teabot@gitea.io>

This PR started as a refactor and now does 4 things.

- [x] Move the organization and user renaming logic into the services layer
and merge it into one function

- [x] Support renaming a user by capitalization only. For example, rename
the user from `Lunny` to `lunny`. We just need to change one table, `user`,
and the others should not be touched.

- [x] Before this PR, some renames were missed, such as `agit`

- [x] Fix a bug: the status returned by the API changes from `http.StatusNoContent` to `http.StatusOK`

Replace #16455

Close #21803

Mixing different Gitea contexts together causes some problems:

1. Unable to respond with proper content when an error occurs, eg: Web should

respond HTML while API should respond JSON

2. Unclear dependency, eg: it's unclear when Context is used in

APIContext, which fields should be initialized, which methods are

necessary.

To make things clear, this PR introduces a Base context, it only

provides basic Req/Resp/Data features.

This PR mainly moves code. There are still many legacy problems and

TODOs in code, leave unrelated changes to future PRs.

This PR

- [x] Move some functions from `issues.go` to `issue_stats.go` and

`issue_label.go`

- [x] Remove duplicated issue options `UserIssueStatsOption` to keep

only one `IssuesOptions`

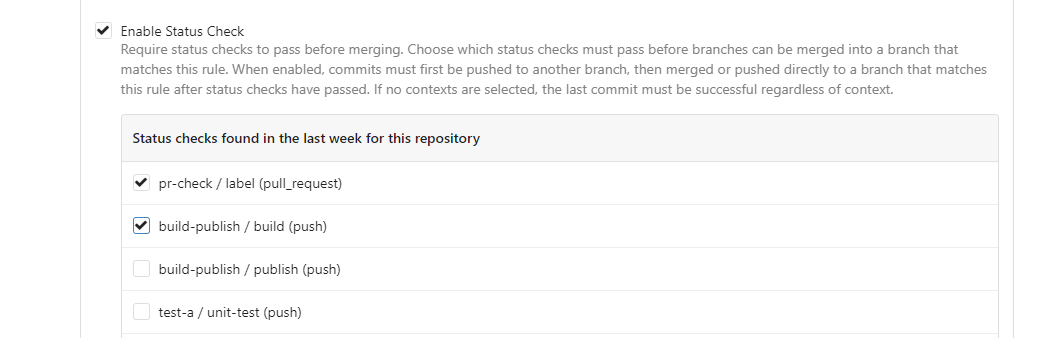

This PR is to allow users to specify status checks by patterns. Users

can enter patterns in the "Status Check Pattern" `textarea` to match

status checks and each line specifies a pattern. If "Status Check" is

enabled, patterns cannot be empty and user must enter at least one

pattern.

Users will no longer be able to choose status checks from the table. But

a __*`Matched`*__ mark will be added to the matched checks to help users

enter patterns.
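The line-per-pattern matching described above could look roughly like the sketch below. It uses the standard library's `path.Match` as a stand-in for the glob matcher, which is an assumption: with `path.Match`, `*` does not match across `/`, so the real matcher may behave differently. The function names are hypothetical.

```go
package main

import (
	"path"
	"strings"
)

// parsePatterns splits the textarea content into one pattern per line,
// trimming whitespace and skipping empty lines.
func parsePatterns(text string) []string {
	var patterns []string
	for _, line := range strings.Split(text, "\n") {
		if p := strings.TrimSpace(line); p != "" {
			patterns = append(patterns, p)
		}
	}
	return patterns
}

// isMatched reports whether a status check context matches at least one
// pattern. Malformed patterns are ignored in this sketch.
func isMatched(patterns []string, context string) bool {
	for _, p := range patterns {
		if ok, err := path.Match(p, context); err == nil && ok {
			return true
		}
	}
	return false
}
```

A check for which `isMatched` returns true is the kind of entry that would get the `Matched` mark in the table.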

Benefits:

- Even if no status checks have been completed, users can specify

necessary status checks in advance.

- More flexible. Users can specify a series of status checks by one

pattern.


---------

Co-authored-by: silverwind <me@silverwind.io>

Co-author: @pboguslawski

"registration success email" is only used for notifying a user that "you

have a new account now" when the account is created by admin manually.

When a user uses an external auth source, they already know that they have
the account, so do not send such an email.

Co-authored-by: Giteabot <teabot@gitea.io>

The `GetAllCommits` endpoint can be pretty slow, especially in repos

with a lot of commits. The issue is that it spends a lot of time

calculating information that may not be useful/needed by the user.

The `stat` param was previously added in #21337 to address this, by

allowing the user to disable the calculating stats for each commit. But

this has two issues:

1. The name `stat` is rather misleading, because disabling `stat`

disables the Stat **and** Files. This should be separated out into two

different params, because getting a list of affected files is much less

expensive than calculating the stats

2. There's still other costly information provided that the user may not

need, such as `Verification`

This PR adds two parameters to the endpoint, `files` and `verification`

to allow the user to explicitly disable this information when listing

commits. The default behavior is true.
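The "default is true" behavior for these query parameters can be sketched as follows. The struct and function names are hypothetical, and treating an unparsable value as the default is an assumption of this example.

```go
package main

import (
	"net/url"
	"strconv"
)

// boolValue parses a raw query value, falling back to def when the value
// is missing or cannot be parsed as a boolean.
func boolValue(raw string, def bool) bool {
	if raw == "" {
		return def
	}
	v, err := strconv.ParseBool(raw)
	if err != nil {
		return def
	}
	return v
}

// commitListOptions holds the switches for the optional, costly parts.
type commitListOptions struct {
	Stat         bool
	Files        bool
	Verification bool
}

// optionsFromQuery reads the three switches; each one defaults to true,
// so existing clients keep getting the full response.
func optionsFromQuery(q url.Values) commitListOptions {
	return commitListOptions{
		Stat:         boolValue(q.Get("stat"), true),
		Files:        boolValue(q.Get("files"), true),
		Verification: boolValue(q.Get("verification"), true),
	}
}
```

A client that only needs commit messages could then send `stat=false&files=false&verification=false` to skip all three computations.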

1. Remove unused fields/methods in web context.

2. Make callers call target function directly instead of the light

wrapper like "IsUserRepoReaderSpecific"

3. The "issue template" code shouldn't be put in the "modules/context"

package, so move them to the service package.

---------

Co-authored-by: Giteabot <teabot@gitea.io>

# ⚠️ Breaking

Many deprecated queue config options are removed (actually, they should

have been removed in 1.18/1.19).

If you see the fatal message when starting Gitea: "Please update your

app.ini to remove deprecated config options", please follow the error

messages to remove these options from your app.ini.

Example:

```

2023/05/06 19:39:22 [E] Removed queue option: `[indexer].ISSUE_INDEXER_QUEUE_TYPE`. Use new options in `[queue.issue_indexer]`

2023/05/06 19:39:22 [E] Removed queue option: `[indexer].UPDATE_BUFFER_LEN`. Use new options in `[queue.issue_indexer]`

2023/05/06 19:39:22 [F] Please update your app.ini to remove deprecated config options

```

Many options in `[queue]` are dropped, including:

`WRAP_IF_NECESSARY`, `MAX_ATTEMPTS`, `TIMEOUT`, `WORKERS`,

`BLOCK_TIMEOUT`, `BOOST_TIMEOUT`, `BOOST_WORKERS`, they can be removed

from app.ini.

# The problem

The old queue package has some legacy problems:

* complexity: I doubt many people could tell how it works.

* maintainability: Too many channels and mutex/cond are mixed together,
too many different structs/interfaces depend on each other.

* stability: due to the complexity & maintainability, there are sometimes
strange bugs that are difficult to debug, and some code doesn't have tests
(indeed some code is difficult to test because a lot of things are mixed
together).

* general applicability: although it is called "queue", its behavior is

not a well-known queue.

* scalability: it doesn't seem easy to make it work with a cluster

without breaking its behaviors.

It came from some very old code to "avoid breaking", however, its

technical debt is too heavy now. It's a good time to introduce a better

"queue" package.

# The new queue package

It keeps using old config and concept as much as possible.

* It only contains two major kinds of concepts:

* The "base queue": channel, levelqueue, redis

* They have the same abstraction, the same interface, and they are

tested by the same testing code.

* The "WorkerPoolQueue", it uses the "base queue" to provide "worker

pool" function, calls the "handler" to process the data in the base

queue.

* The new code doesn't do "PushBack"

* Think about a queue with many workers, the "PushBack" can't guarantee

the order for re-queued unhandled items, so in new code it just does

"normal push"

* The new code doesn't do "pause/resume"

* The "pause/resume" was designed to handle some handler's failure: eg:

document indexer (elasticsearch) is down

* If a queue is paused for a long time, either the producers block or the

new items are dropped.

* The new code doesn't do such "pause/resume" trick, it's not a common

queue's behavior and it doesn't help much.

* If there are unhandled items, the "push" function just blocks for a

few seconds and then re-queue them and retry.

* The new code doesn't do "worker booster"

* Gitea's queue's handlers are light functions, the cost is only the

go-routine, so it doesn't make sense to "boost" them.

* The new code only uses "max worker number" to limit the concurrent

workers.

* The new "Push" never blocks forever

* Instead of creating more and more blocking goroutines, returning an error

is more friendly to the server and to the end user.
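The "Push never blocks forever" idea can be illustrated with a plain channel and a timer. This is a simplified sketch, not the queue package's code: the function name, the error value and the use of a bare `chan string` as the queue are assumptions.

```go
package main

import (
	"errors"
	"time"
)

var errQueueFull = errors.New("queue is full")

// pushWithTimeout tries to enqueue item and waits at most timeout for
// free space. Instead of blocking forever, it returns an error.
func pushWithTimeout(queue chan<- string, item string, timeout time.Duration) error {
	timer := time.NewTimer(timeout)
	defer timer.Stop()
	select {
	case queue <- item:
		return nil
	case <-timer.C:
		return errQueueFull
	}
}
```

The caller decides what to do with the error, for example reporting it to the user, which keeps the number of blocked goroutines bounded.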

There are more details in code comments: eg: the "Flush" problem, the

strange "code.index" hanging problem, the "immediate" queue problem.

Almost ready for review.

TODO:

* [x] add some necessary comments during review

* [x] add some more tests if necessary

* [x] update documents and config options

* [x] test max worker / active worker

* [x] re-run the CI tasks to see whether any test is flaky

* [x] improve the `handleOldLengthConfiguration` to provide more

friendly messages

* [x] fine tune default config values (eg: length?)

## Code coverage:

Close #24213

Replace #23830

#### Cause

- Before, in order to let a PR get the latest commit after reopening, the
`ref` (${REPO_PATH}/refs/pull/${PR_INDEX}/head) of every closed PR was
updated when pushing commits to the `head branch` of the closed PR.

#### Changes

- For a closed PR, these actions are no longer performed when pushing to
the `head branch` of the closed PR: inserting a `comment`, pushing a
`notification` (UI and email), executing the
[pushToBaseRepo](7422503341/services/pull/pull.go (L409))
function and triggering an `action`.

- Refresh the reference of the PR when reopening the closed PR (**even

if the head branch has been deleted before**). Make the reference of PR

consistent with the `head branch`.

The `..` should be covered by TestUserTitleToWebPath.

Otherwise, if the random string is "..", it causes an unnecessary failure in TestUserWebGitPathConsistency.

Since the login form label for user_name unconditionally displays

`Username or Email Address` for the `user_name` field, bring matching

LDAP filters to more prominence in the documentation/placeholders.

Signed-off-by: Gary Moon <gary@garymoon.net>

Partially for #24457

Major changes:

1. The old `signedUserNameStringPointerKey` is quite hacky, use

`ctx.Data[SignedUser]` instead

2. Move duplicate code from `Contexter` to `CommonTemplateContextData`

3. Remove the incorrectly copy-pasted code `ctx.Data["Err_Password"] = true` in API handlers

4. Use one unique `RenderPanicErrorPage` for panic error page rendering

5. Move `stripSlashesMiddleware` to be the first middleware

6. Install global panic recovery handler, it works for both `install`

and `web`

7. Make `500.tmpl` depend only on a minimal set of template functions/variables, to avoid triggering new panics


Add NTLM authentication support for mail, using "github.com/Azure/go-ntlmssp".

---------

Co-authored-by: yangtan_win <YangTan@Fitsco.com.cn>

Co-authored-by: silverwind <me@silverwind.io>

Co-authored-by: @awkwardbunny

This PR adds a Debian package registry. You can follow [this

tutorial](https://www.baeldung.com/linux/create-debian-package) to build

a *.deb package for testing. Source packages are not supported at the

moment and I did not find documentation of the architecture "all" and

how these packages should be treated.

---------

Co-authored-by: Brian Hong <brian@hongs.me>

Co-authored-by: techknowlogick <techknowlogick@gitea.io>

There was only one `IsRepositoryExist` function, it did: `has && isDir`

However it's not right, and it would cause 500 error when creating a new

repository if the dir exists.

Then, it was changed to `has || isDir`, it is still incorrect, it

affects the "adopt repo" logic.

To make the logic clear:

* IsRepositoryModelOrDirExist

* IsRepositoryModelExist

Fix https://github.com/go-gitea/gitea/pull/24362/files#r1179095324

`getAuthenticatedMeta` has already checked them, so this code is a duplicate. In addition, the first invocation has a wrong permission check: `DownloadHandle` should require read permission, not write.

> The scoped token PR just checked all API routes but in fact, some web

routes like `LFS`, git `HTTP`, container, and attachments supports basic

auth. This PR added scoped token check for them.

---------

Signed-off-by: jolheiser <john.olheiser@gmail.com>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

The Infinite Monkey Random Typing caught a bug: inconsistent wiki path conversion.

Close #24276

Co-authored-by: silverwind <me@silverwind.io>

Co-authored-by: Giteabot <teabot@gitea.io>

- Update all tool dependencies to latest tag

- Remove unused errcheck, it is part of golangci-lint

- Include main.go in air

- Enable wastedassign again now that it's

[generics-compatible](https://github.com/golangci/golangci-lint/pull/3689)

- Restructured lint targets to new `lint-*` namespace

Before, there was a `log/buffer.go`, but that design is not general, and

it introduces a lot of irrelevant `Content() (string, error) ` and

`return "", fmt.Errorf("not supported")` .

And the old `log/buffer.go` is difficult to use, developers have to

write a lot of `Contains` and `Sleep` code.

The new `LogChecker` is designed as a general approach for asserting that certain messages appear, or do not appear, in logs.

Close #24195

Some of the changes are taken from another fix of mine, f07b0de997, in #20147 (although that PR was discarded).

The bug is:

1. The old code doesn't handle `removedfile` event correctly

2. The old code doesn't provide attachments for type=CommentTypeReview

This PR doesn't intend to refactor the "upload" code to a perfect state

(to avoid making the review difficult), so some legacy styles are kept.

---------

Co-authored-by: silverwind <me@silverwind.io>

Co-authored-by: Giteabot <teabot@gitea.io>

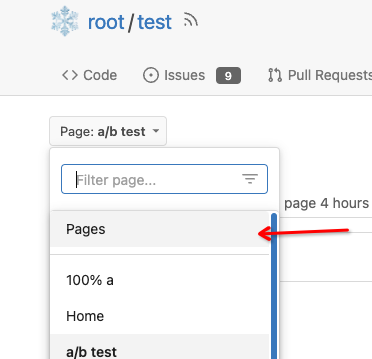

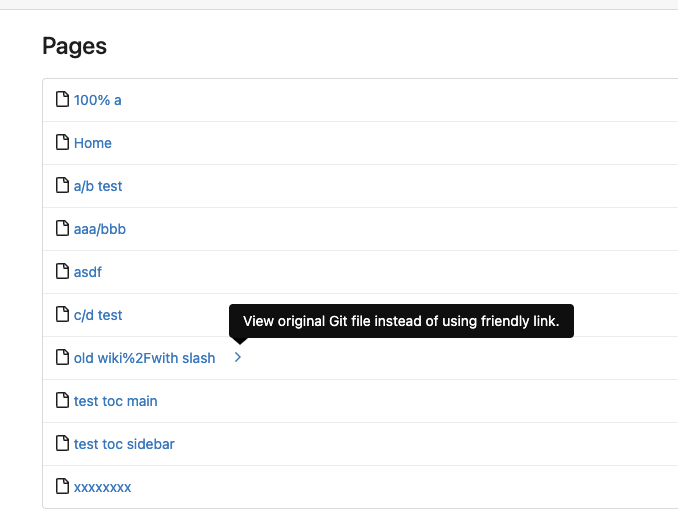

Close #7570

1. Clearly define the wiki path behaviors, see

`services/wiki/wiki_path.go` and tests

2. Keep compatibility with old contents

3. Allow dashes in titles, e.g. "2000-01-02 Meeting record"

4. Add a "Pages" link in the dropdown, otherwise users can't go to the

Pages page easily.

5. Add a "View original git file" link in the Pages list, even if some

file names are broken, users still have a chance to edit or remove it,

without cloning the wiki repo to local.

6. Fix 500 error when the name contains prefix spaces.

This PR also introduces the ability to support sub-directories, but that can't be enabled at the moment because there is a lot of legacy wiki data that uses "%2F" in file names.

Co-authored-by: Giteabot <teabot@gitea.io>

This allows for usernames, and emails connected to them to be reserved

and not reused.

Use case: I manage an instance with open registration, and sometimes when users are deleted for spam (or other purposes), their usernames are freed up and they sign up again with the same information.

This could also be used to reserve usernames, and block them from being

registered (in case an instance would like to block certain things

without hardcoding the list in code and compiling from scratch).

This is an MVP, that will allow for future work where you can set

something as reserved via the interface.

---------

Co-authored-by: delvh <dev.lh@web.de>

Co-authored-by: John Olheiser <john.olheiser@gmail.com>

Close #24062

At the beginning, I just wanted to fix the warning mentioned in #24062. But the cookie code really doesn't look good to me, so I cleaned it up.

Complete the TODO on `SetCookie`:

> TODO: Copied from gitea.com/macaron/macaron and should be improved

after macaron removed.

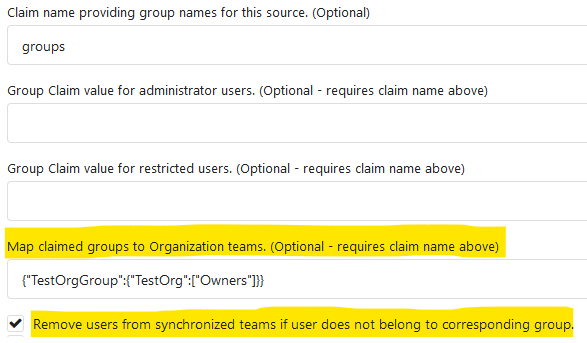

In the `for` loop, the value of `membershipsToAdd[org]` and

`membershipsToRemove[org]` is a slice that should be appended instead of

overwritten.

Due to the current overwrite, the LDAP group sync only matches the last

group at the moment.

## Example reproduction

- an LDAP user is both a member of

`cn=admin_staff,ou=people,dc=planetexpress,dc=com` and

`cn=ship_crew,ou=people,dc=planetexpress,dc=com`.

- configuration of `Map LDAP groups to Organization teams ` in

`Authentication Sources`:

```json
{
  "cn=admin_staff,ou=people,dc=planetexpress,dc=com": {
    "test_organization": [
      "admin_staff",
      "test_add"
    ]
  },
  "cn=ship_crew,ou=people,dc=planetexpress,dc=com": {
    "test_organization": [
      "ship_crew"
    ]
  }
}
```

- start `Synchronize external user data` task in the `Dashboard`.

- the user was only added for the team `test_organization.ship_crew`
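The overwrite-versus-append difference can be sketched with the mapping from the reproduction. This is a simplified illustration: `groupTeamMap` and `teamsToAdd` are hypothetical names, and the real sync code handles `membershipsToRemove` and more state than shown here.

```go
package main

import "fmt"

// groupTeamMap mirrors the shape of the JSON mapping: LDAP group -> organization -> teams.
type groupTeamMap map[string]map[string][]string

// teamsToAdd collects, per organization, the teams of every LDAP group the user belongs to.
func teamsToAdd(mapping groupTeamMap, userGroups []string) map[string][]string {
	membershipsToAdd := map[string][]string{}
	for _, group := range userGroups {
		for org, teams := range mapping[group] {
			// Assigning here (membershipsToAdd[org] = teams) would keep only the
			// teams of the last matching group; appending keeps all of them.
			membershipsToAdd[org] = append(membershipsToAdd[org], teams...)
		}
	}
	return membershipsToAdd
}

func main() {
	mapping := groupTeamMap{
		"cn=admin_staff,ou=people,dc=planetexpress,dc=com": {"test_organization": {"admin_staff", "test_add"}},
		"cn=ship_crew,ou=people,dc=planetexpress,dc=com":   {"test_organization": {"ship_crew"}},
	}
	userGroups := []string{
		"cn=admin_staff,ou=people,dc=planetexpress,dc=com",
		"cn=ship_crew,ou=people,dc=planetexpress,dc=com",
	}
	fmt.Println(teamsToAdd(mapping, userGroups))
}
```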

None of the features of `unrolled/render` package is used.

The Golang builtin "html/template" just works well. Then we can improve

our HTML render to resolve the "$.root.locale.Tr" problem as much as

possible.

Next step: we can have a template render pool (by Clone), then we can inject global functions with dynamic context into every `Execute` call. Then we can use `{{Locale.Tr ....}}` directly in all templates, with no need to pass the `$.root.locale` again and again.
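A minimal sketch of the Clone idea, using only the standard `html/template` package. It clones once per render instead of keeping a pool, and `translator`, `renderString` and the `home.title` key are hypothetical names invented for the example.

```go
package main

import (
	"fmt"
	"html/template"
	"strings"
)

// translator is a hypothetical stand-in for a per-request locale object.
type translator struct{ lang string }

func (t translator) Tr(key string) string {
	if t.lang == "de" && key == "home.title" {
		return "Startseite"
	}
	return key
}

// base is parsed once with a placeholder "Locale" function so that parsing succeeds.
// It is never executed itself, which keeps it cloneable.
var base = template.Must(template.New("page").Funcs(template.FuncMap{
	"Locale": func() translator { return translator{} },
}).Parse(`<h1>{{Locale.Tr "home.title"}}</h1>`))

// renderString clones the base template and overrides "Locale" with a
// function bound to the current request's language before executing.
func renderString(lang string) (string, error) {
	t, err := base.Clone()
	if err != nil {
		return "", err
	}
	t.Funcs(template.FuncMap{
		"Locale": func() translator { return translator{lang: lang} },
	})
	var sb strings.Builder
	if err := t.Execute(&sb, nil); err != nil {
		return "", err
	}
	return sb.String(), nil
}

func main() {
	for _, lang := range []string{"en", "de"} {
		out, err := renderString(lang)
		if err != nil {
			panic(err)
		}
		fmt.Println(out)
	}
}
```

Cloning on every render has a cost, which is presumably why the text above suggests a pool of clones.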

Currently using the tip of main

(2c585d62a4) and when deleting a branch

(and presumably tag, but not tested), no workflows with `on: [delete]`

are being triggered. The runner isn't being notified about them. I see

this in the gitea log:

`2023/04/04 04:29:36 ...s/notifier_helper.go:102:Notify() [E] an error

occurred while executing the NotifyDeleteRef actions method:

gitRepo.GetCommit: object does not exist [id: test, rel_path: ]`

Understandably the ref has already been deleted and so `GetCommit`

fails. Currently at

https://github.com/go-gitea/gitea/blob/main/services/actions/notifier_helper.go#L130,

if the ref is an empty string it falls back to the default branch name.

This PR also checks if it is a `HookEventDelete` and does the same.
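The fallback described above can be sketched as a small helper. The types and the function name `refForCommitLookup` are simplified assumptions for illustration; the actual check lives inline in `notifier_helper.go`.

```go
package main

import "fmt"

// HookEventType and the constants below are simplified stand-ins for this sketch.
type HookEventType string

const (
	HookEventPush   HookEventType = "push"
	HookEventDelete HookEventType = "delete"
)

// refForCommitLookup picks the ref used to look up the commit.
// A deleted ref no longer resolves to a commit, so delete events fall back
// to the default branch, just like an empty ref does.
func refForCommitLookup(event HookEventType, ref, defaultBranch string) string {
	if ref == "" || event == HookEventDelete {
		return defaultBranch
	}
	return ref
}

func main() {
	fmt.Println(refForCommitLookup(HookEventPush, "refs/heads/test", "main"))
	fmt.Println(refForCommitLookup(HookEventDelete, "refs/heads/test", "main"))
	fmt.Println(refForCommitLookup(HookEventPush, "", "main"))
}
```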

Currently `${{ github.ref }}` would be equivalent to the deleted branch

(if `notify()` succeeded), but this PR allows `notify()` to proceed and

also aligns it with the GitHub Actions behavior at

https://docs.github.com/en/actions/using-workflows/events-that-trigger-workflows#delete: